The Python code that goes along with this blog post can be found at https://github.com/Atrix256/InverseDFTProblems

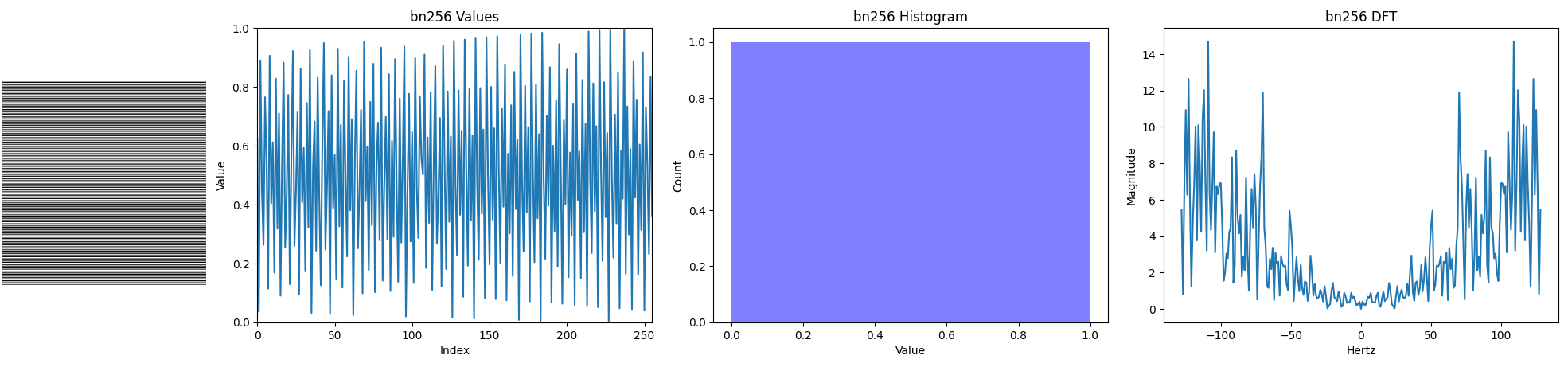

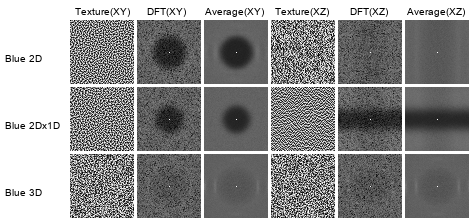

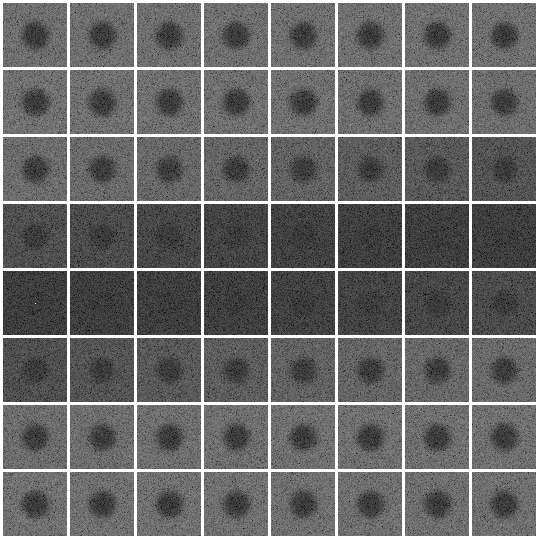

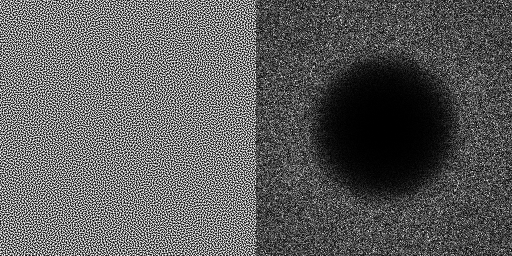

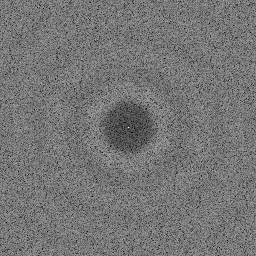

To evaluate the quality of a blue noise texture, you can analyze it in frequency space by taking a discrete Fourier transform (DFT). What you want to see is something that looks like TV static (white noise) with a darkened center, like the below. The frequencies in the center are the low frequencies, while the frequencies towards the edges are the high frequencies. This DFT shows high frequency randomness without any low frequency content, which is what blue noise is.
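As a rough illustration of that kind of analysis, here is a minimal NumPy sketch that computes a centered DFT magnitude image. The function name `dft_magnitude` is made up for this example, and the input is plain white noise standing in for a real blue noise texture, so it won't show the dark center described above.

```python
import numpy as np

def dft_magnitude(texture):
    """Return the 2D DFT magnitude with the low frequencies moved to the center."""
    spectrum = np.fft.fft2(texture)
    # fftshift moves the 0 Hz (DC) bin from the corner to the center of the image
    return np.abs(np.fft.fftshift(spectrum))

# Stand-in input: white noise, NOT an actual blue noise texture
rng = np.random.default_rng(0)
texture = rng.random((64, 64))

# Subtract the mean so the DC bin doesn't dominate the picture
mag = dft_magnitude(texture - texture.mean())
```

Loading a real blue noise texture into `texture` and viewing `mag` as a grayscale image should give the darkened center surrounded by static.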

A common question then is: why can’t you just make what you want in frequency space and do an inverse Fourier transform to get the noise you want? This could let you make all sorts of custom crafted types of noise, not just spatial blue noise.

Let’s try that out in 1D and see what happens.

First we make N complex values in polar form, each with a random angle from 0 to 2π and a random radius from 0 to 1. These will be the frequencies for our N noise values. We also want to make sure that the + and – frequency bins are complex conjugates of each other so that when we do an IDFT, we’ll get a strictly real valued signal.
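A minimal sketch of that setup with NumPy might look like the following. The function name `random_hermitian_spectrum` is made up here, and forcing the DC and Nyquist bins to be real is my assumption for getting a real signal; the code in the repo may handle those bins differently.

```python
import numpy as np

def random_hermitian_spectrum(n, rng):
    """Make n random complex frequencies whose IDFT is strictly real."""
    angles = rng.uniform(0.0, 2.0 * np.pi, n)
    radii = rng.uniform(0.0, 1.0, n)
    freqs = radii * np.exp(1j * angles)

    # In NumPy's bin layout, bin k is the + frequency and bin n-k is the - frequency
    for k in range(1, (n + 1) // 2):
        freqs[n - k] = np.conj(freqs[k])

    # Assumption: DC (and Nyquist, for even n) are their own partners, so make them real
    freqs[0] = freqs[0].real
    if n % 2 == 0:
        freqs[n // 2] = freqs[n // 2].real
    return freqs

rng = np.random.default_rng(0)
freqs = random_hermitian_spectrum(64, rng)
signal = np.fft.ifft(freqs)  # imaginary part is zero up to rounding error
```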

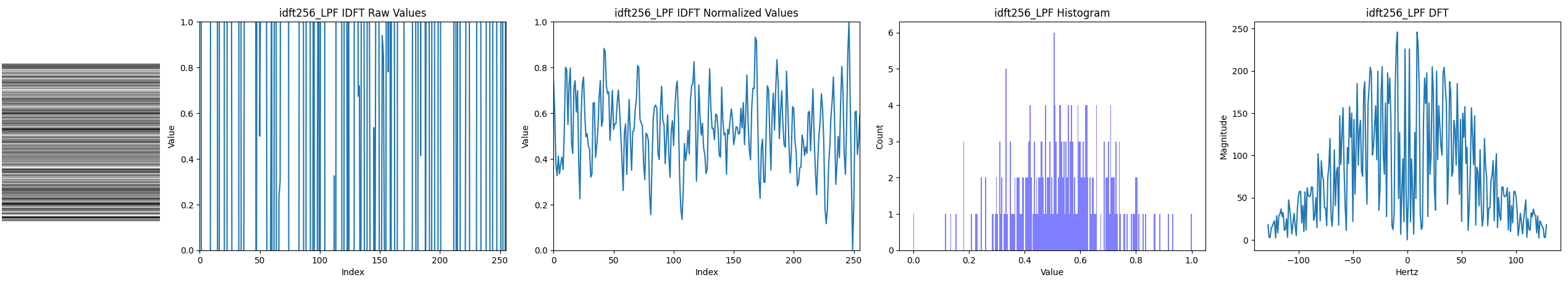

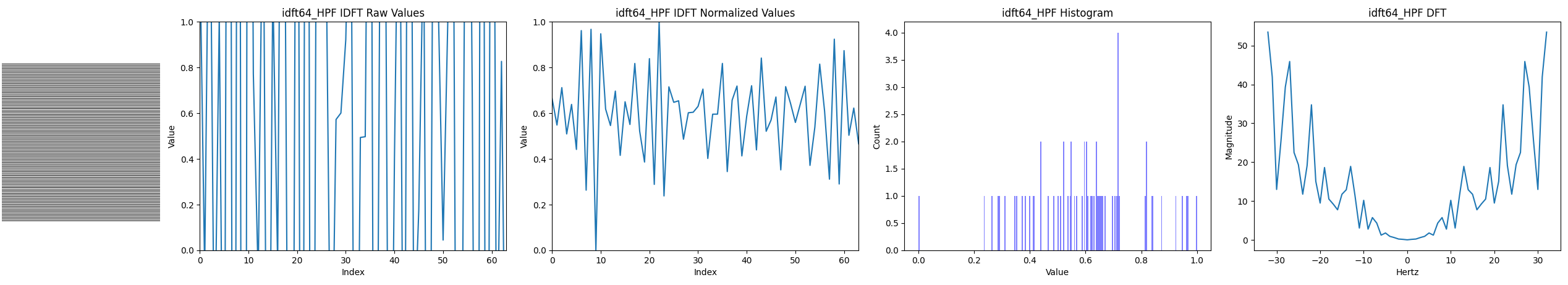

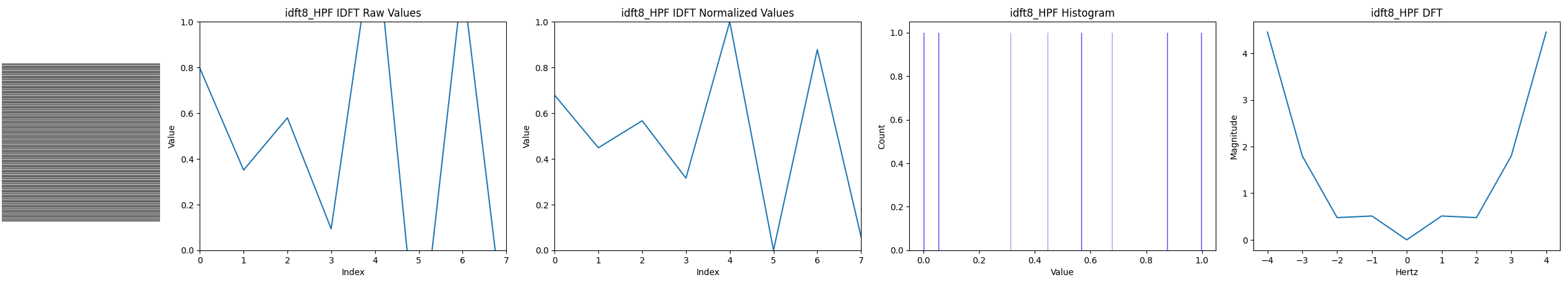

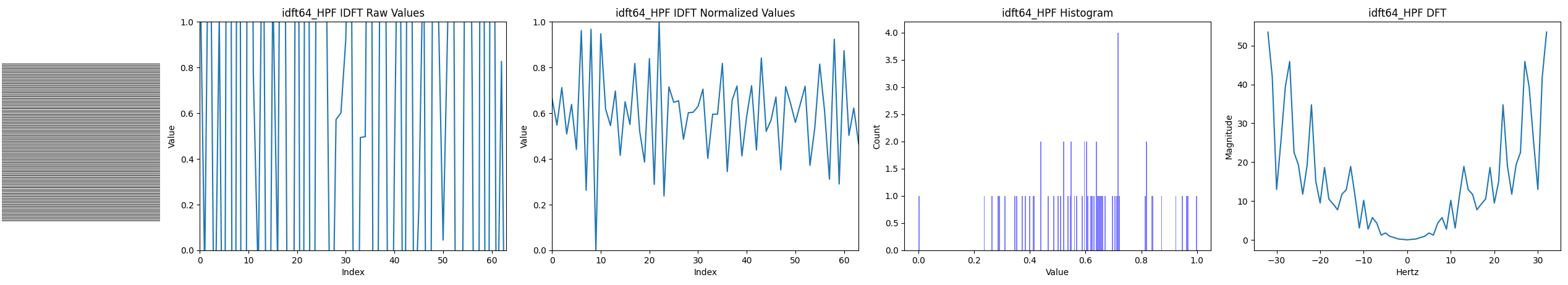

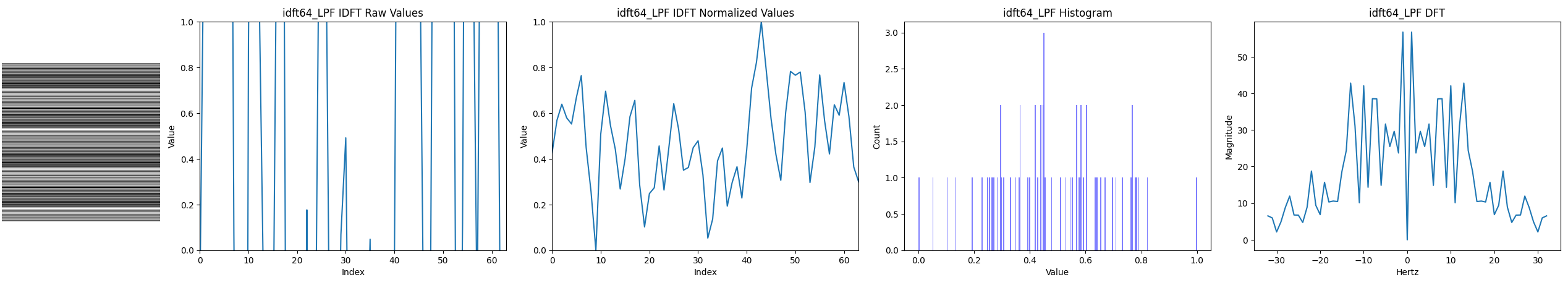

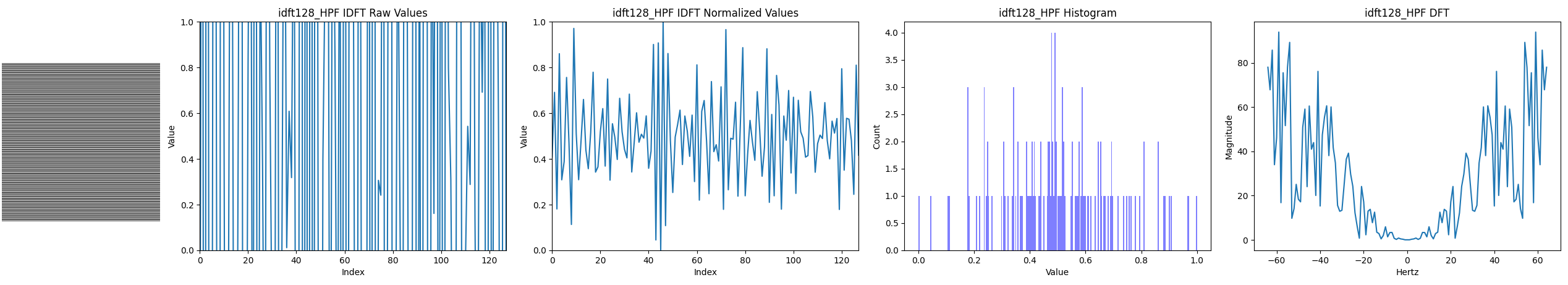

After initializing these frequencies to white noise, we’ll multiply the values by a Gaussian kernel to make the values towards the edges smaller. This is a low pass filter since the higher frequencies are reduced and the lower frequencies are mostly left alone. At this point, an IDFT would give us low frequency red noise, so we subtract these frequencies from the original white noise initialized frequencies. This is a high pass filter because the higher frequencies are left alone, while the low frequencies are reduced. At this point, an IDFT would give us high frequency blue noise. (There are a couple of other things done, like setting the 0 Hz DC frequency bin to a specific value. Check out the Python code for more details.) Here is what we get if we do this for 64 noise values (N = 64):
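Here is a rough sketch of those filtering steps, not the code from the repo. It uses `np.fft.irfft`, which only needs the non-negative frequency bins and implies the conjugate symmetry for the rest. The kernel width `sigma` is a made-up value, and in this sketch the DC bin simply ends up at zero because the Gaussian is 1 there, which may not match what the repo does.

```python
import numpy as np

n = 64
rng = np.random.default_rng(1)

# White noise frequencies for bins 0..n/2; irfft treats the negative bins as conjugates
half = n // 2 + 1
white = rng.uniform(0.0, 1.0, half) * np.exp(1j * rng.uniform(0.0, 2.0 * np.pi, half))

# Gaussian kernel centered on DC. sigma is a hypothetical choice, not from the post.
k = np.arange(half)
sigma = n / 8.0
lowpass = np.exp(-0.5 * (k / sigma) ** 2)

red_freqs = white * lowpass     # low pass: high frequencies reduced
blue_freqs = white - red_freqs  # high pass: low frequencies reduced

red_noise = np.fft.irfft(red_freqs, n=n)
blue_noise = np.fft.irfft(blue_freqs, n=n)

# Magnitudes of the result, to check where the energy ended up
blue_mag = np.abs(np.fft.rfft(blue_noise))
```

Plotting `blue_mag` should show small magnitudes near bin 0 that grow towards the high frequency bins.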

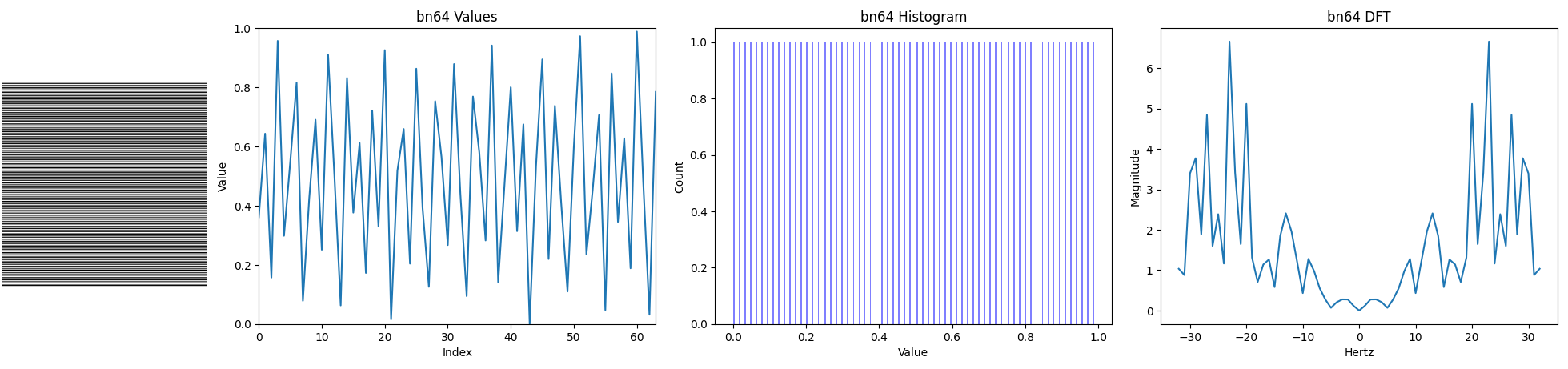

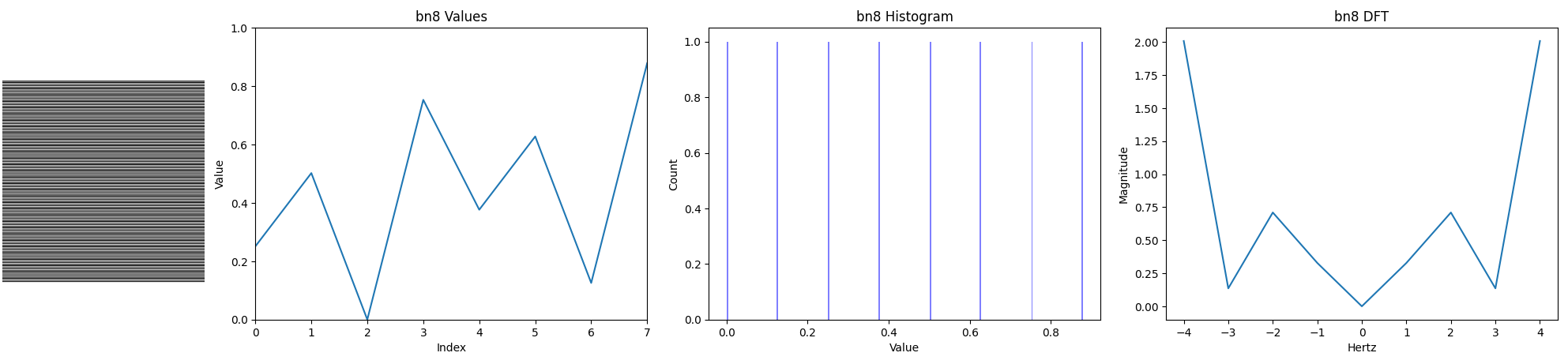

Let’s see how this compares to 64 blue noise values made with the void and cluster algorithm:

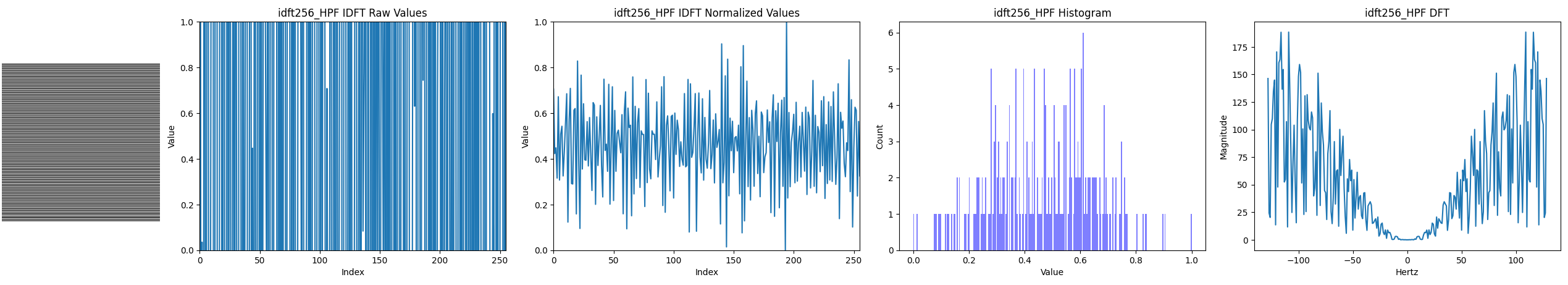

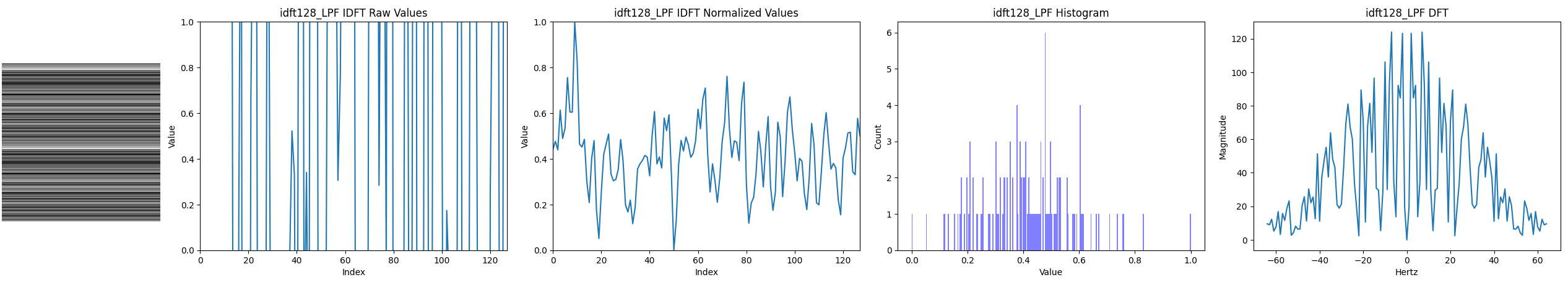

The frequencies in the DFTs (right) look pretty similar, but the histogram (2nd from right) from the void and cluster algorithm is much more uniform, and the values (3rd from right) look a lot more even. The output of the IDFT actually gave us the “raw values” shown 2nd from left in the first image, which are outside of the [0,1] range, but are scaled and shifted to make the “normalized values” shown next to it.
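The scale and shift could be done as in the sketch below. I'm assuming a simple min/max remap here; the repo may normalize differently, and `normalize_to_unit_range` is just a name for this example.

```python
import numpy as np

def normalize_to_unit_range(values):
    """Scale and shift values so the smallest becomes 0 and the largest becomes 1."""
    values = np.asarray(values, dtype=float)
    lo, hi = values.min(), values.max()
    if hi == lo:
        # A constant signal has no range to stretch; return zeros rather than divide by 0
        return np.zeros_like(values)
    return (values - lo) / (hi - lo)

# Made-up raw values outside of [0,1], like an IDFT might produce
raw = np.array([-0.3, 0.1, 0.45, -0.05, 0.2])
normalized = normalize_to_unit_range(raw)
```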

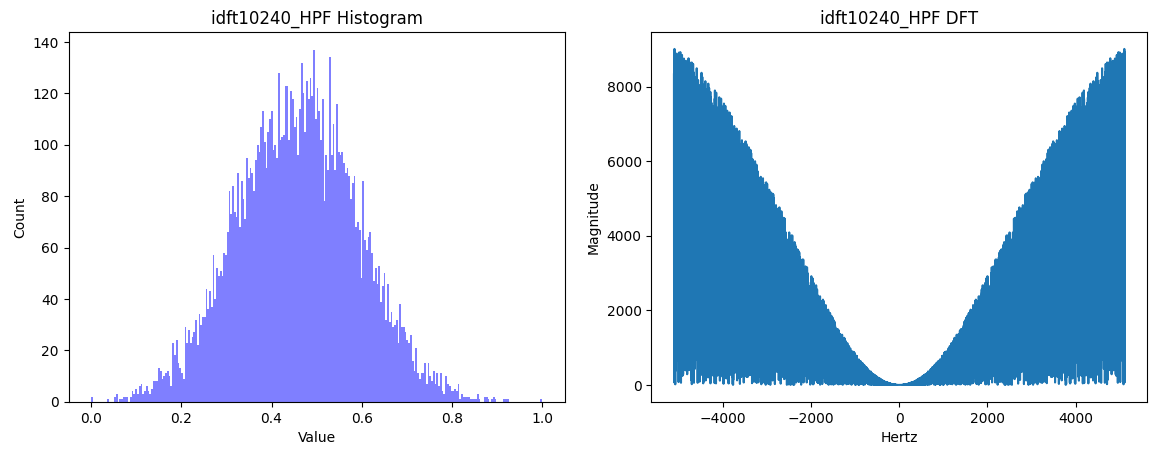

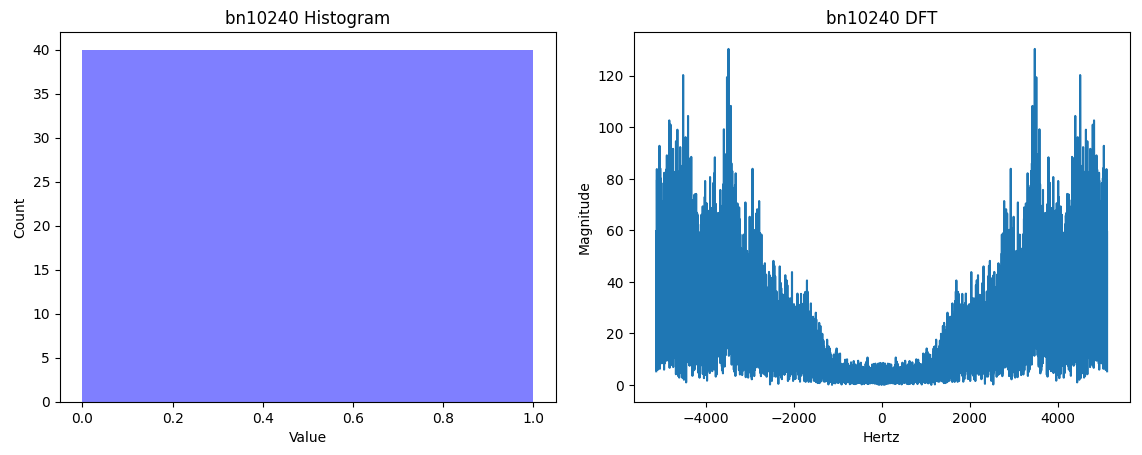

Let’s look at the histogram and DFT for each at 10,240 samples. First is the IDFT method, then void and cluster generated blue noise.

So interestingly, the IDFT method makes noise that is Gaussian distributed. This kind of makes sense because we are filling out the frequencies with uniform random white noise, and each frequency turns into a sinusoid with a random amplitude and phase. Those sinusoids are summed together, and a sum of many random values tends towards a Gaussian distribution as you add more of them (the central limit theorem). In contrast, the void and cluster method makes values with a perfectly uniform histogram.
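One quick way to check this numerically is excess kurtosis, which is 0 for a Gaussian distribution and -1.2 for a uniform one. The sketch below is my own check, not something from the repo: it runs an IDFT on a random spectrum (no filtering, for simplicity) and compares against plain uniform random values.

```python
import numpy as np

def excess_kurtosis(x):
    """Fourth standardized moment minus 3: 0 for Gaussian, -1.2 for uniform."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    return np.mean(centered ** 4) / np.mean(centered ** 2) ** 2 - 3.0

n = 10240
rng = np.random.default_rng(2)

# Random radius and angle per frequency bin, then IDFT to get a real signal
half = n // 2 + 1
freqs = rng.uniform(0.0, 1.0, half) * np.exp(1j * rng.uniform(0.0, 2.0 * np.pi, half))
idft_values = np.fft.irfft(freqs, n=n)

uniform_values = rng.uniform(0.0, 1.0, n)

print("IDFT excess kurtosis:   ", excess_kurtosis(idft_values))
print("uniform excess kurtosis:", excess_kurtosis(uniform_values))
```

The IDFT values should land near 0 and the uniform values near -1.2.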

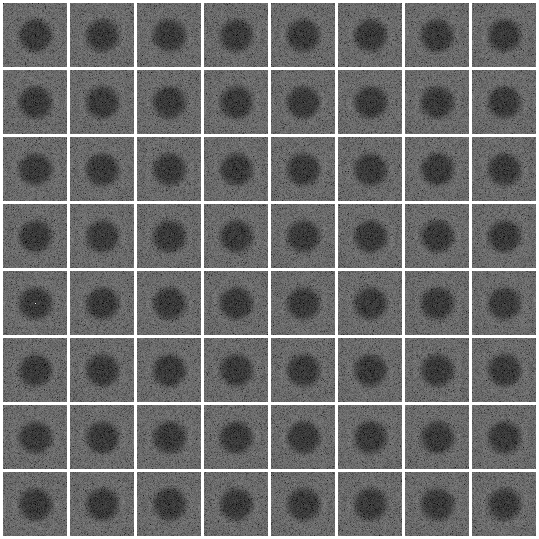

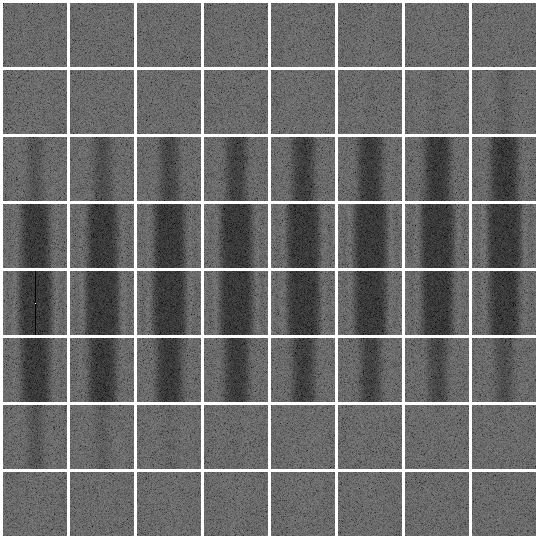

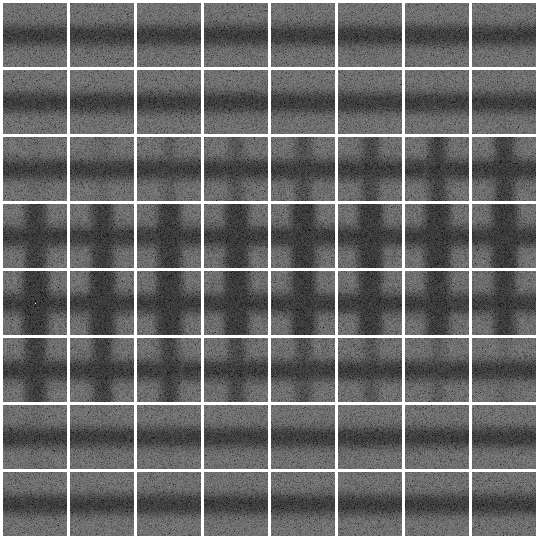

The other interesting difference is that the IDFT method has frequency magnitudes very closely matching a Gaussian, while the void and cluster algorithm has distinct valleys. I’ve seen these valleys show up as ripples in DFTs like the below, for 2D blue noise DFTs. It’s unclear to me if the ripples add value to the noise or if they are an imperfect artifact, but seeing as we often see these ripples in DSP (like with sinc), it’s my guess that these probably do add value, but I can’t quantify it.

Most blue noise textures are uniformly distributed (we recently put out some work showing how to make them non-uniformly distributed though: https://github.com/NVIDIAGameWorks/SpatiotemporalBlueNoiseSDK) but if you wanted Gaussian distributed blue noise for some reason, maybe this IDFT method would work well for you? Hard to say but it could be interesting to try it out.

This is ultimately what the problem with the IDFT method is though… you get Gaussian distributed values, not uniform, and the noise seems to be lower quality as well. If these issues could be solved, or if this noise has value as is, I think that’d be a really interesting and useful result. It would be interesting to then take this to vector valued masks and see if the same could be done there (check out my last post for more info: https://blog.demofox.org/2021/12/27/not-all-blue-noise-is-created-equal-part-2/).

The way I made noise through IDFT may be completely different from what you have in mind, and if so, you may get very different results. I’d love to hear any thoughts. I’m on Twitter as @Atrix256.

I wonder if doing gradient descent on histograms and frequency phases could make uniform distributions and higher quality noise?

Also, while there is importance in blue noise being actual blue noise (high frequency, better perceptually and designed to be removed with a Gaussian blur), there is also importance in the fact that neighboring pixel values are very different from each other. I haven’t seen any methods for generating blue noise that were based on (anti)correlation but I would bet there’s a method waiting to be found there. If you do an autocorrelation of a blue noise texture, it shows that pixels have anticorrelation with their neighbors, slight correlation with the neighbors of their neighbors, even slighter anticorrelation with the neighbors of those neighbors, and so on. The ripple goes flat pretty quickly, so maybe an algorithm to satisfy those constraints wouldn’t be that difficult or have that long of a run time?
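If you want to look at that autocorrelation yourself, one way is to take the IDFT of the power spectrum, which gives the circular autocorrelation. The sketch below is a 1D version using Gaussian high pass filtered white noise as a stand-in signal, so it is not void and cluster blue noise and the exact ripple pattern may differ. The names and the `sigma` value are made up for this example.

```python
import numpy as np

def circular_autocorrelation(x):
    """Autocorrelation at every lag, normalized so that lag 0 is 1."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    # The IDFT of the power spectrum is the circular autocorrelation
    ac = np.fft.irfft(power, n=len(x))
    return ac / ac[0]

n = 1024
rng = np.random.default_rng(3)

# Stand-in signal: white noise frequencies with a Gaussian high pass filter applied
half = n // 2 + 1
white = rng.uniform(0.0, 1.0, half) * np.exp(1j * rng.uniform(0.0, 2.0 * np.pi, half))
sigma = n / 8.0  # hypothetical kernel width
highpass = 1.0 - np.exp(-0.5 * (np.arange(half) / sigma) ** 2)
values = np.fft.irfft(white * highpass, n=n)

ac = circular_autocorrelation(values)
print("lags 0 to 4:", ac[:5])
```

Lag 1 should come out negative, meaning each value tends to be different from its immediate neighbor.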

More Data

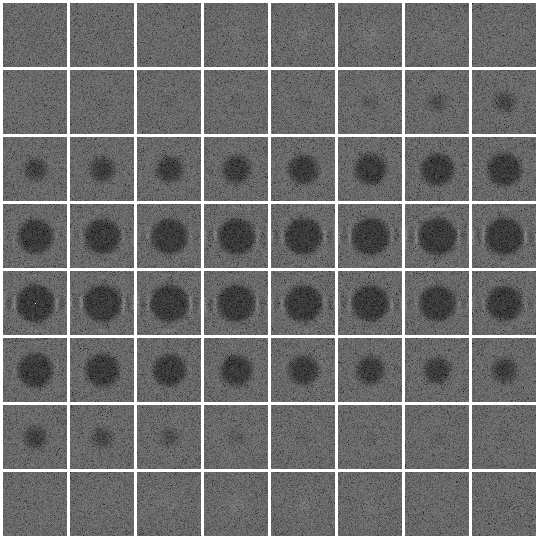

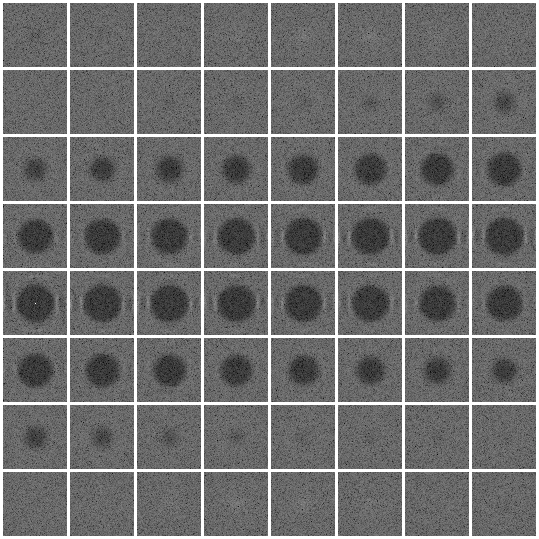

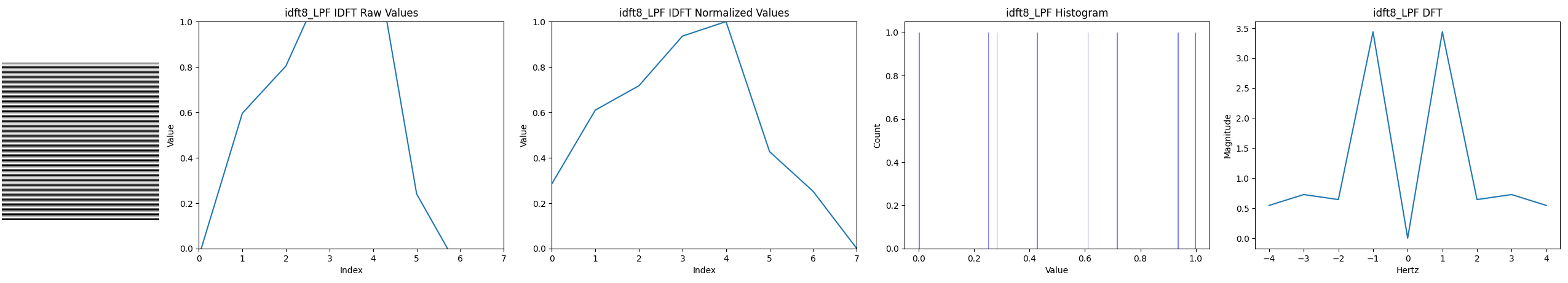

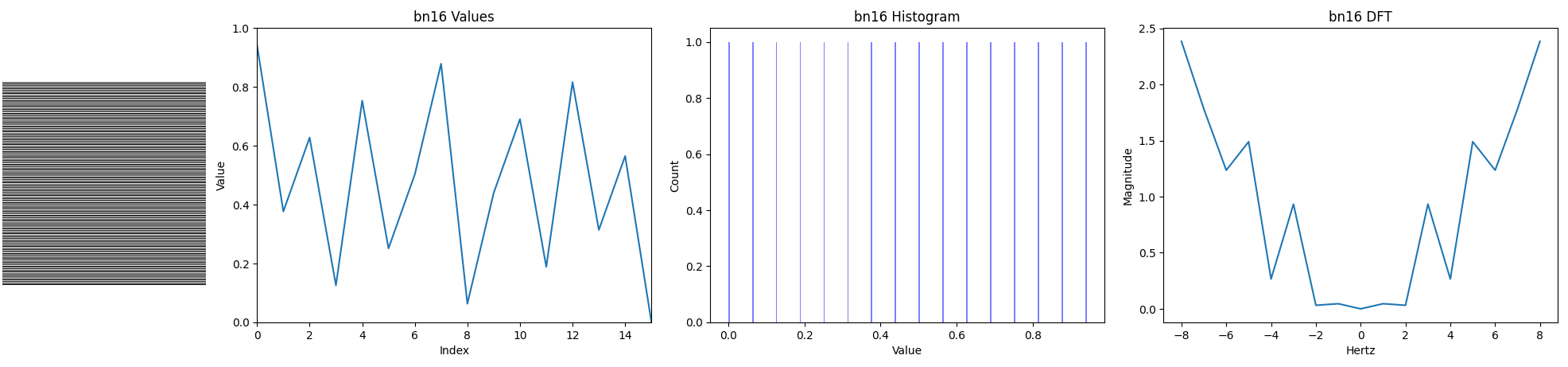

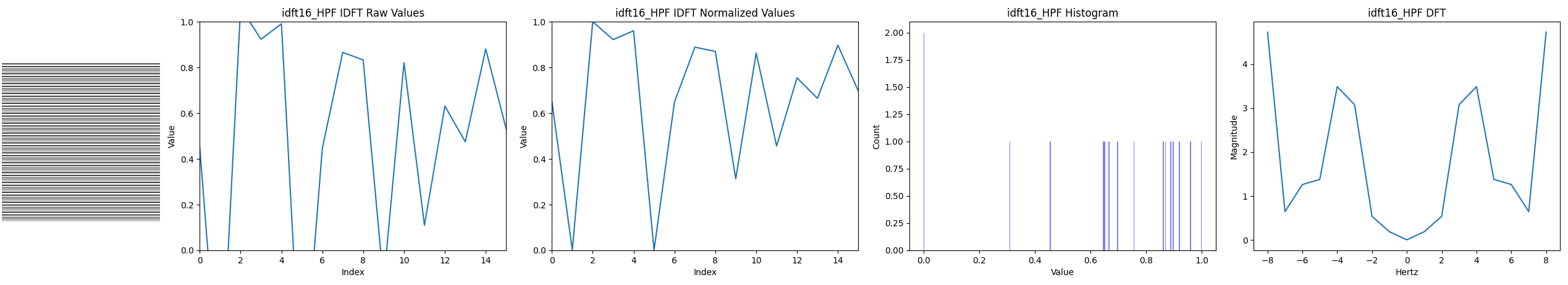

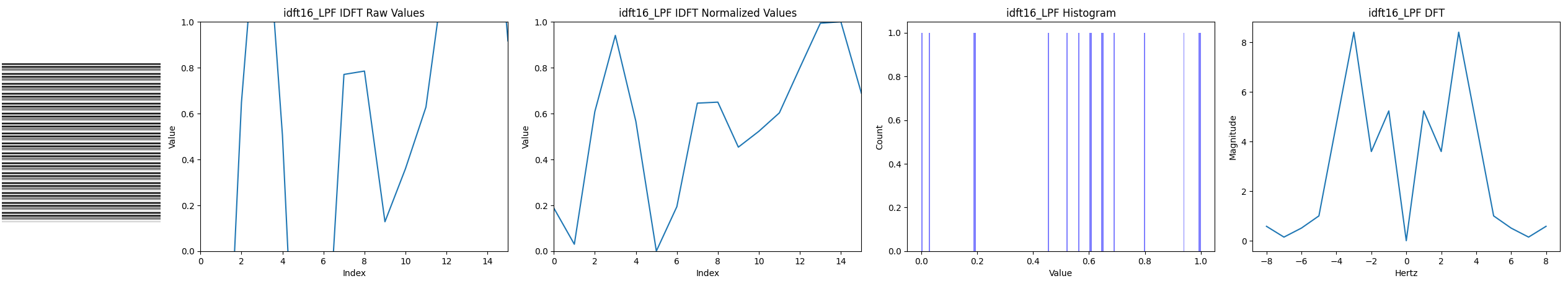

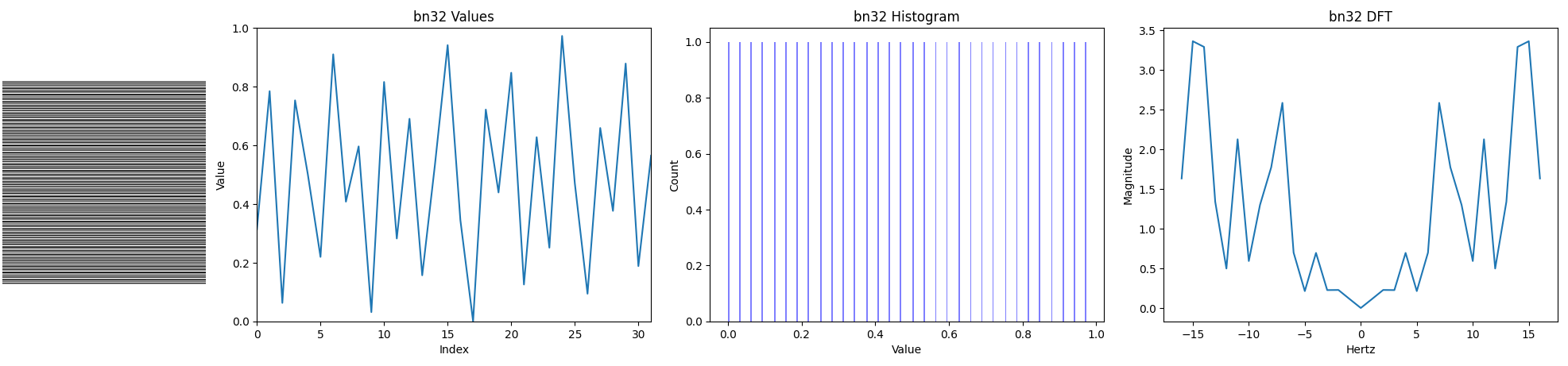

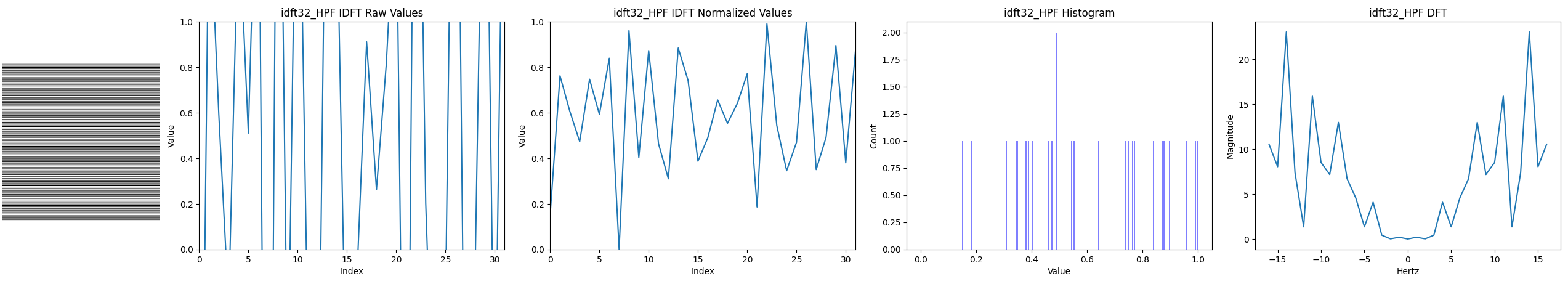

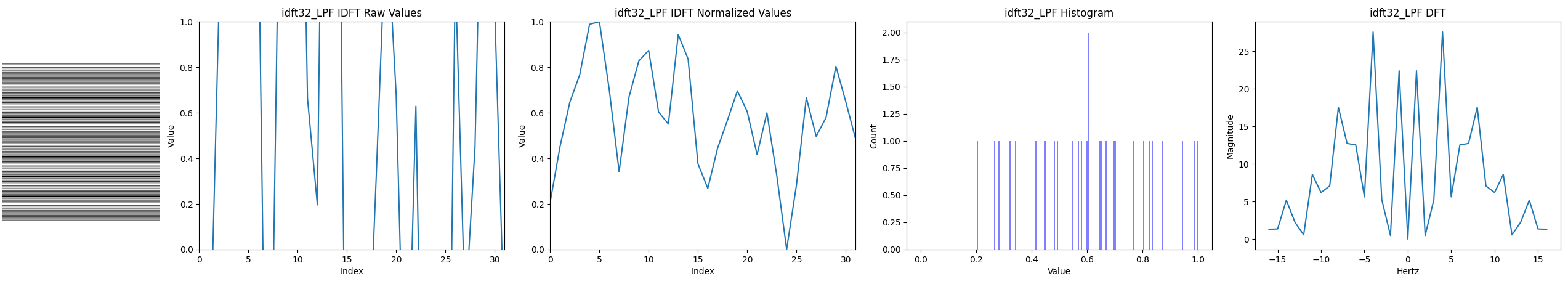

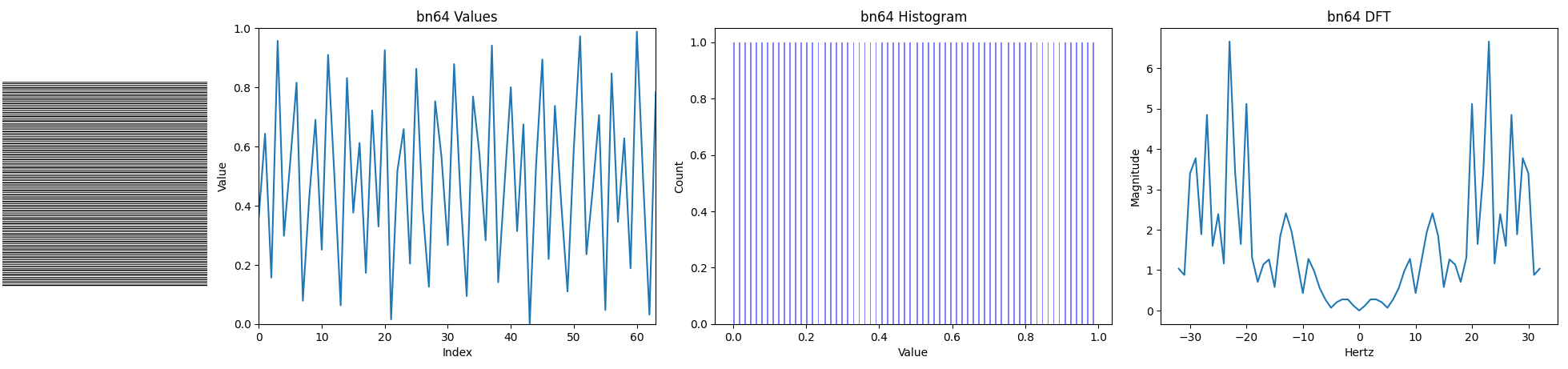

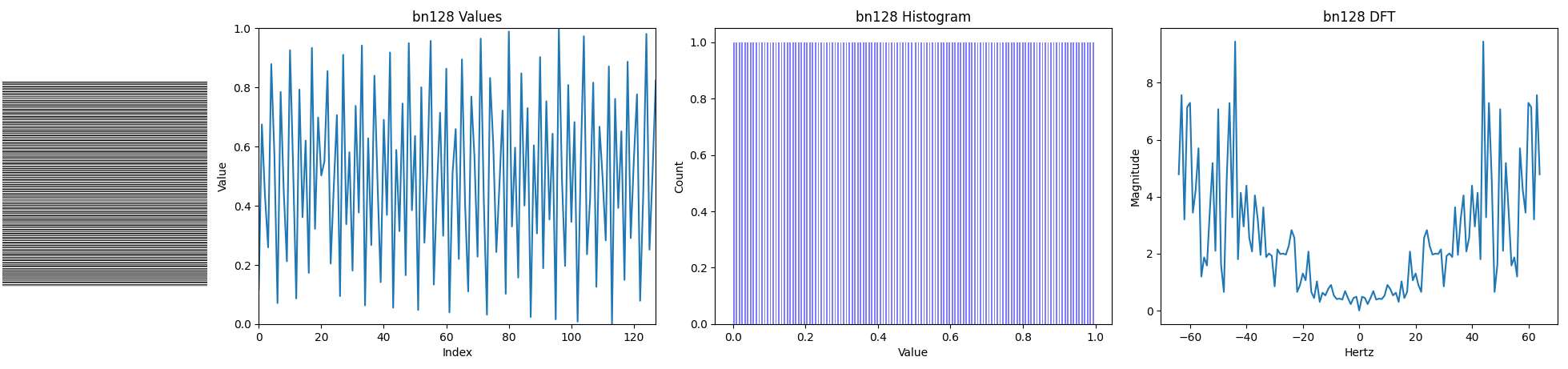

Here are some more comparisons of (1) void and cluster blue noise, (2) IDFT high pass filtered white noise to make blue noise, (3) IDFT low pass filtered white noise to make red noise. We’ll compare 8, 16, 32, 64, 128 and 256 values.

8 values:

16 values:

32 values:

64 values:

128 values:

256 values: