This is a part of the DIY Synthesizer series of posts where each post is roughly built upon the knowledge of the previous posts. If you are lost, check the earlier posts!

I just learned about this technique a couple weeks ago and am SUPER stoked to be able to share this info. Honestly, it’s kind of bad ass and a little magical.

If there is a physical location that has the reverb you are looking for (a church, a cave, your bathroom, the subway, whatever), you can go there and record the sound of some short, loud sound (a clap, a starter pistol, whatever). Now that you have this sound file, you can use a mathematical technique called “Discrete Convolution” (not as difficult as it sounds) to apply that reverb to other sounds.

Not only that, you can use convolution to apply various properties of one sound to another sound. Like you could convolve a saw wave and a recording of a voice and make it sound robotic.

This is pretty cool right?

Convolution

There are two types of convolution out there… continuous convolution and discrete convolution.

Continuous convolution is a method where you can take two formulas and perform some operations (algebra, calculus, etc) to get a third formula.

In the cases that us programmers usually work with, however, you usually only have data, and not a mathematical formula describing that data.

When you have specific data points instead of an equation, you can use something called discrete convolution instead.

Discrete convolution is a method where you take two streams of data (IE two arrays of data!) and perform some operations (only looping, addition and multiplication!) to get a third stream of data.

Interestingly, this third stream of data will be the size of the first stream of data, plus the size of the second stream of data, minus one.

This link explains discrete convolution much better than I could, and even has an interactive demo, so check it out: Discrete Convolution

Here is some pseudo code that shows how discrete convolution works.

// We are calculating bufferA * bufferB (discrete convolution)

// initialize out buffer to empty, and make sure it's the right size

outBuffer[sizeof(bufferA)+sizeof(bufferB)-1] = 0

// reverse one of the buffers in preperation of the convolution

reverse(bufferB);

// Convolve!

for (int outBufferIndex = 0; outBufferIndex < sizeof(outBuffer); ++outBufferIndex)

{

bufferAIndex = 0;

bufferBIndex = 0;

if (outBufferIndex < sizeof(bufferB))

bufferBIndex = sizeof(bufferB) - outBufferIndex - 1;

else

bufferAIndex = outBufferIndex - sizeof(bufferB);

for (; bufferAIndex < sizeof(bufferA) && bufferBIndex < sizeof(bufferB); ++bufferAIndex, ++bufferBIndex)

{

outBuffer[outBufferIndex] += bufferA[bufferAIndex] * bufferB[bufferBIndex];

}

}

That's all there is to it!

Convolution Reverb

Like I mentioned above, to do convolution reverb you just convolve the audio file you want to add reverb to with the recorded sound from the place that has the reverb you want. That second sound is called the Impulse Response, or IR for short.

Besides letting you choose a reverb model (like “cavernous” or “small hallway” or “bright tiled room”), many reverbs will have a “wetness” parameter that controls how much reverb you hear versus how much of the origional noise you hear. A wetness of 1.0 means all you hear is the reverb (the convolution), while a wetness of 0.0 means all you hear is the origional sound (the dry sound). It’s just a percentage and literally is just used to lerp between the two signals.

Here is an example of how changes in wetness can sound, and also give you a first glimpse of just how good this technique works!

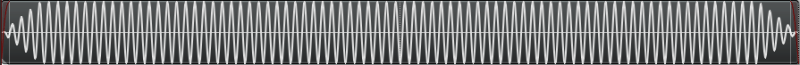

Dry Sound: SoundBite

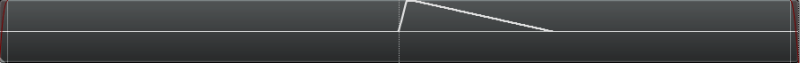

Impulse Response: ReverbLarge

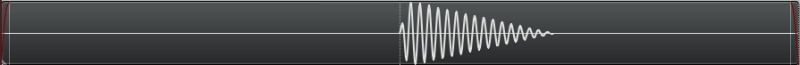

Convolved with 100% wetness: c_RL_SBite.wav

Convolved with 66% wetness: c_RL_SBite_66.wav

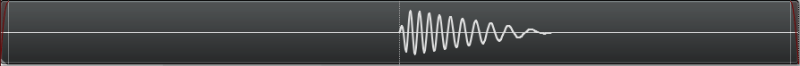

Convolved with 33% wetness: c_RL_SBite_33.wav

Sample Sound Files

Let’s convolve some sounds together and see what results we get!

Here are 11 sounds convoled against each other (and themselves) at 100% wetness so all you hear is the convolution. Convolution is commutative (A*B == B*A) so that cuts down on the number of combinations (66 instead of 121!)

There are 3 impulse responses, 4 short basic wave forms, 2 voice samples a cymbal drum pattern and a jaw harp twang.

Some of the results are pretty interesting, for instance check out SoundBite vs SoundCymbal. The beat of the cymbal pattern remains, but the actual symbal sound itself is replaced by the words!

Sample Code

Here is some sample code which will read in “in.wav” and “reverb.wav”, do convolution on them and save the fully wet result (convolution result only, no original in.wav mixed into the output) as “out.wav”.

Note that this code was used to process the sample sound files above. Usual caveat: The sound loading code isn’t bulletproof. I’ve found it works best with 16 bit mono sound files. If you need better sound loading, check out libsndfile!

#define _CRT_SECURE_NO_WARNINGS

#include

#include

#include

#include

#include

#include

#include

#include

#define _USE_MATH_DEFINES

#include

//=====================================================================================

// SNumeric - uses phantom types to enforce type safety

//=====================================================================================

template

struct SNumeric

{

public:

explicit SNumeric(const T &value) : m_value(value) { }

SNumeric() : m_value() { }

inline T& Value() { return m_value; }

inline const T& Value() const { return m_value; }

typedef SNumeric TType;

typedef T TInnerType;

// Math Operations

TType operator+ (const TType &b) const

{

return TType(this->Value() + b.Value());

}

TType operator- (const TType &b) const

{

return TType(this->Value() - b.Value());

}

TType operator* (const TType &b) const

{

return TType(this->Value() * b.Value());

}

TType operator/ (const TType &b) const

{

return TType(this->Value() / b.Value());

}

TType& operator+= (const TType &b)

{

Value() += b.Value();

return *this;

}

TType& operator-= (const TType &b)

{

Value() -= b.Value();

return *this;

}

TType& operator*= (const TType &b)

{

Value() *= b.Value();

return *this;

}

TType& operator/= (const TType &b)

{

Value() /= b.Value();

return *this;

}

TType& operator++ ()

{

Value()++;

return *this;

}

TType& operator-- ()

{

Value()--;

return *this;

}

// Extended Math Operations

template

T Divide(const TType &b)

{

return ((T)this->Value()) / ((T)b.Value());

}

// Logic Operations

bool operatorValue() < b.Value();

}

bool operatorValue() (const TType &b) const {

return this->Value() > b.Value();

}

bool operator>= (const TType &b) const {

return this->Value() >= b.Value();

}

bool operator== (const TType &b) const {

return this->Value() == b.Value();

}

bool operator!= (const TType &b) const {

return this->Value() != b.Value();

}

private:

T m_value;

};

//=====================================================================================

// Typedefs

//=====================================================================================

typedef uint8_t uint8;

typedef uint16_t uint16;

typedef uint32_t uint32;

typedef int16_t int16;

typedef int32_t int32;

// type safe types!

typedef SNumeric TFrequency;

typedef SNumeric TTimeMs;

typedef SNumeric TSamples;

typedef SNumeric TFractionalSamples;

typedef SNumeric TDecibels;

typedef SNumeric TAmplitude;

typedef SNumeric TPhase;

//=====================================================================================

// Constants

//=====================================================================================

static const float c_pi = (float)M_PI;

static const float c_twoPi = c_pi * 2.0f;

//=====================================================================================

// Structs

//=====================================================================================

struct SSoundSettings

{

TSamples m_sampleRate;

};

//=====================================================================================

// Conversion Functions

//=====================================================================================

inline TDecibels AmplitudeToDB(TAmplitude volume)

{

return TDecibels(log10(volume.Value()));

}

inline TAmplitude DBToAmplitude(TDecibels dB)

{

return TAmplitude(pow(10.0f, dB.Value() / 20.0f));

}

TSamples SecondsToSamples(const SSoundSettings &s, float seconds)

{

return TSamples((int)(seconds * (float)s.m_sampleRate.Value()));

}

TSamples MilliSecondsToSamples(const SSoundSettings &s, float milliseconds)

{

return SecondsToSamples(s, milliseconds / 1000.0f);

}

TTimeMs SecondsToMilliseconds(float seconds)

{

return TTimeMs((uint32)(seconds * 1000.0f));

}

TFrequency Frequency(float octave, float note)

{

/* frequency = 440×(2^(n/12))

Notes:

0 = A

1 = A#

2 = B

3 = C

4 = C#

5 = D

6 = D#

7 = E

8 = F

9 = F#

10 = G

11 = G# */

return TFrequency((float)(440 * pow(2.0, ((double)((octave - 4) * 12 + note)) / 12.0)));

}

template

T AmplitudeToAudioSample(const TAmplitude& in)

{

const T c_min = std::numeric_limits::min();

const T c_max = std::numeric_limits::max();

const float c_minFloat = (float)c_min;

const float c_maxFloat = (float)c_max;

float ret = in.Value() * c_maxFloat;

if (ret c_maxFloat)

return c_max;

return (T)ret;

}

TAmplitude GetLerpedAudioSample(const std::vector& samples, TFractionalSamples& index)

{

// get the index of each sample and the fractional blend amount

uint32 a = (uint32)floor(index.Value());

uint32 b = a + 1;

float fract = index.Value() - floor(index.Value());

// get our two amplitudes

float ampA = 0.0f;

if (a >= 0 && a = 0 && b < samples.size())

ampB = samples[b].Value();

// return the lerped result

return TAmplitude(fract * ampB + (1.0f - fract) * ampA);

}

void NormalizeSamples(std::vector& samples, TAmplitude maxAmplitude, TSamples envelopeTimeFrontBack)

{

// nothing to do if no samples

if (samples.size() == 0)

return;

// 1) find the largest absolute value in the samples.

TAmplitude largestAbsVal = TAmplitude(abs(samples.front().Value()));

std::for_each(samples.begin() + 1, samples.end(), [&largestAbsVal](const TAmplitude &a)

{

TAmplitude absVal = TAmplitude(abs(a.Value()));

if (absVal > largestAbsVal)

largestAbsVal = absVal;

}

);

// 2) adjust largestAbsVal so that when we divide all samples, none will be bigger than maxAmplitude

// if the value we are going to divide by is <= 0, bail out

largestAbsVal /= maxAmplitude;

if (largestAbsVal <= TAmplitude(0.0f))

return;

// 3) divide all numbers by the largest absolute value seen so all samples are [-maxAmplitude,+maxAmplitude]

// also apply front and back envelope

const TSamples c_frontEnvelopeEnd(envelopeTimeFrontBack);

const TSamples c_backEnvelopeStart(samples.size() - envelopeTimeFrontBack.Value());

for (TSamples index(0), numSamples(samples.size()); index < numSamples; ++index)

{

// calculate envelope

TAmplitude envelope(1.0f);

if (index < c_frontEnvelopeEnd)

envelope = TAmplitude(index.Divide(envelopeTimeFrontBack));

else if (index > c_backEnvelopeStart)

envelope = TAmplitude(1.0f) - TAmplitude((index - c_backEnvelopeStart).Divide(envelopeTimeFrontBack));

// apply envelope while normalizing

samples[index.Value()] = samples[index.Value()] * envelope / largestAbsVal;

}

}

void ResampleData(std::vector& samples, int srcSampleRate, int destSampleRate)

{

//if the requested sample rate is the sample rate it already is, bail out and do nothing

if (srcSampleRate == destSampleRate)

return;

//calculate the ratio of the old sample rate to the new

float fResampleRatio = (float)destSampleRate / (float)srcSampleRate;

//calculate how many samples the new data will have and allocate the new sample data

int nNewDataNumSamples = (int)((float)samples.size() * fResampleRatio);

std::vector newSamples;

newSamples.resize(nNewDataNumSamples);

//get each lerped output sample. There are higher quality ways to resample

for (int nIndex = 0; nIndex < nNewDataNumSamples; ++nIndex)

newSamples[nIndex] = GetLerpedAudioSample(samples, TFractionalSamples((float)nIndex / fResampleRatio));

//free the old data and set the new data

std::swap(samples, newSamples);

}

void ChangeNumChannels(std::vector& samples, int nSrcChannels, int nDestChannels)

{

//if the number of channels requested is the number of channels already there, or either number of channels is not mono or stereo, return

if (nSrcChannels == nDestChannels ||

nSrcChannels 2 ||

nDestChannels 2)

{

return;

}

//if converting from mono to stereo, duplicate the mono channel to make stereo

if (nDestChannels == 2)

{

std::vector newSamples;

newSamples.resize(samples.size() * 2);

for (size_t index = 0; index < samples.size(); ++index)

{

newSamples[index * 2] = samples[index];

newSamples[index * 2 + 1] = samples[index];

}

std::swap(samples, newSamples);

}

//else converting from stereo to mono, mix the stereo channels together to make mono

else

{

std::vector newSamples;

newSamples.resize(samples.size() / 2);

for (size_t index = 0; index < samples.size() / 2; ++index)

newSamples[index] = samples[index * 2] + samples[index * 2 + 1];

std::swap(samples, newSamples);

}

}

float PCMToFloat(unsigned char *pPCMData, int nNumBytes)

{

switch (nNumBytes)

{

case 1:

{

uint8 data = pPCMData[0];

return (float)data / 255.0f;

}

case 2:

{

int16 data = pPCMData[1] << 8 | pPCMData[0];

return ((float)data) / ((float)0x00007fff);

}

case 3:

{

int32 data = pPCMData[2] << 16 | pPCMData[1] << 8 | pPCMData[0];

return ((float)data) / ((float)0x007fffff);

}

case 4:

{

int32 data = pPCMData[3] << 24 | pPCMData[2] << 16 | pPCMData[1] << 8 | pPCMData[0];

return ((float)data) / ((float)0x7fffffff);

}

default:

{

return 0.0f;

}

}

}

//=====================================================================================

// Wave File Writing Code

//=====================================================================================

struct SMinimalWaveFileHeader

{

//the main chunk

unsigned char m_szChunkID[4]; //0

uint32 m_nChunkSize; //4

unsigned char m_szFormat[4]; //8

//sub chunk 1 "fmt "

unsigned char m_szSubChunk1ID[4]; //12

uint32 m_nSubChunk1Size; //16

uint16 m_nAudioFormat; //18

uint16 m_nNumChannels; //20

uint32 m_nSampleRate; //24

uint32 m_nByteRate; //28

uint16 m_nBlockAlign; //30

uint16 m_nBitsPerSample; //32

//sub chunk 2 "data"

unsigned char m_szSubChunk2ID[4]; //36

uint32 m_nSubChunk2Size; //40

//then comes the data!

};

//this writes a wave file

template

bool WriteWaveFile(const char *fileName, const std::vector &samples, const SSoundSettings &sound)

{

//open the file if we can

FILE *file = fopen(fileName, "w+b");

if (!file)

return false;

//calculate bits per sample and the data size

const int32 bitsPerSample = sizeof(T) * 8;

const int dataSize = samples.size() * sizeof(T);

SMinimalWaveFileHeader waveHeader;

//fill out the main chunk

memcpy(waveHeader.m_szChunkID, "RIFF", 4);

waveHeader.m_nChunkSize = dataSize + 36;

memcpy(waveHeader.m_szFormat, "WAVE", 4);

//fill out sub chunk 1 "fmt "

memcpy(waveHeader.m_szSubChunk1ID, "fmt ", 4);

waveHeader.m_nSubChunk1Size = 16;

waveHeader.m_nAudioFormat = 1;

waveHeader.m_nNumChannels = 1;

waveHeader.m_nSampleRate = sound.m_sampleRate.Value();

waveHeader.m_nByteRate = sound.m_sampleRate.Value() * 1 * bitsPerSample / 8;

waveHeader.m_nBlockAlign = 1 * bitsPerSample / 8;

waveHeader.m_nBitsPerSample = bitsPerSample;

//fill out sub chunk 2 "data"

memcpy(waveHeader.m_szSubChunk2ID, "data", 4);

waveHeader.m_nSubChunk2Size = dataSize;

//write the header

fwrite(&waveHeader, sizeof(SMinimalWaveFileHeader), 1, file);

//write the wave data itself, converting it from float to the type specified

std::vector outSamples;

outSamples.resize(samples.size());

for (size_t index = 0; index < samples.size(); ++index)

outSamples[index] = AmplitudeToAudioSample(samples[index]);

fwrite(&outSamples[0], dataSize, 1, file);

//close the file and return success

fclose(file);

return true;

}

//loads a wave file in. Converts from source format into the specified format

// TOTAL HONESTY: some wave files seem to have problems being loaded through this function and I don't have

// time to investigate why. It seems to work best with 16 bit mono wave files.

// If you need more robust file loading, check out libsndfile at http://www.mega-nerd.com/libsndfile/

bool ReadWaveFile(const char *fileName, std::vector& samples, int32 sampleRate)

{

//open the file if we can

FILE *File = fopen(fileName, "rb");

if (!File)

{

return false;

}

//read the main chunk ID and make sure it's "RIFF"

char buffer[5];

buffer[4] = 0;

if (fread(buffer, 4, 1, File) != 1 || strcmp(buffer, "RIFF"))

{

fclose(File);

return false;

}

//read the main chunk size

uint32 nChunkSize;

if (fread(&nChunkSize, 4, 1, File) != 1)

{

fclose(File);

return false;

}

//read the format and make sure it's "WAVE"

if (fread(buffer, 4, 1, File) != 1 || strcmp(buffer, "WAVE"))

{

fclose(File);

return false;

}

long chunkPosFmt = -1;

long chunkPosData = -1;

while (chunkPosFmt == -1 || chunkPosData == -1)

{

//read a sub chunk id and a chunk size if we can

if (fread(buffer, 4, 1, File) != 1 || fread(&nChunkSize, 4, 1, File) != 1)

{

fclose(File);

return false;

}

//if we hit a fmt

if (!strcmp(buffer, "fmt "))

{

chunkPosFmt = ftell(File) - 8;

}

//else if we hit a data

else if (!strcmp(buffer, "data"))

{

chunkPosData = ftell(File) - 8;

}

//skip to the next chunk

fseek(File, nChunkSize, SEEK_CUR);

}

//we'll use this handy struct to load in

SMinimalWaveFileHeader waveData;

//load the fmt part if we can

fseek(File, chunkPosFmt, SEEK_SET);

if (fread(&waveData.m_szSubChunk1ID, 24, 1, File) != 1)

{

fclose(File);

return false;

}

//load the data part if we can

fseek(File, chunkPosData, SEEK_SET);

if (fread(&waveData.m_szSubChunk2ID, 8, 1, File) != 1)

{

fclose(File);

return false;

}

//verify a couple things about the file data

if (waveData.m_nAudioFormat != 1 || //only pcm data

waveData.m_nNumChannels 2 || //must not have more than 2

waveData.m_nBitsPerSample > 32 || //32 bits per sample max

waveData.m_nBitsPerSample % 8 != 0 || //must be a multiple of 8 bites

waveData.m_nBlockAlign > 8) //blocks must be 8 bytes or lower

{

fclose(File);

return false;

}

//figure out how many samples and blocks there are total in the source data

int nBytesPerBlock = waveData.m_nBlockAlign;

int nNumBlocks = waveData.m_nSubChunk2Size / nBytesPerBlock;

int nNumSourceSamples = nNumBlocks * waveData.m_nNumChannels;

//allocate space for the source samples

samples.resize(nNumSourceSamples);

//maximum size of a block is 8 bytes. 4 bytes per samples, 2 channels

unsigned char pBlockData[8];

memset(pBlockData, 0, 8);

//read in the source samples at whatever sample rate / number of channels it might be in

int nBytesPerSample = nBytesPerBlock / waveData.m_nNumChannels;

for (int nIndex = 0; nIndex = TPhase(1.0f))

phase -= TPhase(1.0f);

while (phase 0.5f ? 1.0f : -1.0f);

}

TAmplitude AdvanceOscilator_Triangle(TPhase &phase, TFrequency frequency, TSamples sampleRate)

{

AdvancePhase(phase, frequency, sampleRate);

if (phase > TPhase(0.5f))

return TAmplitude((((1.0f - phase.Value()) * 2.0f) * 2.0f) - 1.0f);

else

return TAmplitude(((phase.Value() * 2.0f) * 2.0f) - 1.0f);

}

TAmplitude AdvanceOscilator_Saw_BandLimited(TPhase &phase, TFrequency frequency, TSamples sampleRate)

{

AdvancePhase(phase, frequency, sampleRate);

// sum the harmonics

TAmplitude ret(0.0f);

for (int harmonicIndex = 1; harmonicIndex <= 4; ++harmonicIndex)

{

TPhase harmonicPhase = phase * TPhase((float)harmonicIndex);

ret += TAmplitude(sin(harmonicPhase.Value()*c_twoPi) / (float)harmonicIndex);

}

//adjust the volume

ret *= TAmplitude(2.0f / c_pi);

return ret;

}

TAmplitude AdvanceOscilator_Square_BandLimited(TPhase &phase, TFrequency frequency, TSamples sampleRate)

{

AdvancePhase(phase, frequency, sampleRate);

// sum the harmonics

TAmplitude ret(0.0f);

for (int harmonicIndex = 1; harmonicIndex <= 4; ++harmonicIndex)

{

float harmonicFactor = (float)harmonicIndex * 2.0f - 1.0f;

TPhase harmonicPhase = phase * TPhase(harmonicFactor);

ret += TAmplitude(sin(harmonicPhase.Value()*c_twoPi) / harmonicFactor);

}

//adjust the volume

ret *= TAmplitude(4.0f / c_pi);

return ret;

}

TAmplitude AdvanceOscilator_Triangle_BandLimited(TPhase &phase, TFrequency frequency, TSamples sampleRate)

{

AdvancePhase(phase, frequency, sampleRate);

// sum the harmonics

TAmplitude ret(0.0f);

TAmplitude signFlip(1.0f);

for (int harmonicIndex = 1; harmonicIndex <= 4; ++harmonicIndex)

{

float harmonicFactor = (float)harmonicIndex * 2.0f - 1.0f;

TPhase harmonicPhase = phase * TPhase(harmonicFactor);

ret += TAmplitude(sin(harmonicPhase.Value()*c_twoPi) / (harmonicFactor*harmonicFactor)) * signFlip;

signFlip *= TAmplitude(-1.0f);

}

//adjust the volume

ret *= TAmplitude(8.0f / (c_pi*c_pi));

return ret;

}

//=====================================================================================

// Main

//=====================================================================================

int main(int argc, char **argv)

{

// wetness parameter of reverb. It's a percentage from 0 to 1.

TAmplitude c_reverbWetness(1.0f);

// keep track of when the process started so we can report how long it took

auto start = std::chrono::system_clock::now().time_since_epoch();

//our desired sound parameters

SSoundSettings sound;

sound.m_sampleRate = TSamples(44100);

// load the input wave file if we can and normalize it

std::vector inputData;

if (!ReadWaveFile("in.wav", inputData, sound.m_sampleRate.Value()))

{

printf("could not load input wave file!");

return 0;

}

NormalizeSamples(inputData, TAmplitude(1.0f), TSamples(0));

// load the reverb wave file if we can and normalize it

std::vector reverbData;

if (!ReadWaveFile("reverb.wav", reverbData, sound.m_sampleRate.Value()))

{

printf("could not load reverb wave file!");

return 0;

}

NormalizeSamples(reverbData, TAmplitude(1.0f), TSamples(0));

// allocate space for the output file - which will be the number of samples in the two input files minus 1

// initialize it to silence!

std::vector samples;

samples.resize(inputData.size() + reverbData.size() - 1);

std::fill(samples.begin(), samples.end(), TAmplitude(0.0f));

// reverse the reverb data in preparation of convolution

std::reverse(reverbData.begin(), reverbData.end());

// report the number of samples of each file

printf("Input samples = %un", inputData.size());

printf("Reverb samples = %un", reverbData.size());

// apply the convolution for each output sample

float lastPercentageReported = 0.0f;

char percentageText[512];

percentageText[0] = 0;

printf("Progress: ");

for (TSamples sampleIndex(0), numSamples(samples.size()); sampleIndex < numSamples; ++sampleIndex)

{

// print some periodic output since this can take a while!

float percentage = sampleIndex.Divide(numSamples);

if (percentage >= lastPercentageReported + 0.01f)

{

// erase the last progress text

for (int i = 0, c = strlen(percentageText); i < c; ++i)

printf("%c %c", 8, 8);

// calculte and show progress text

lastPercentageReported = percentage;

auto duration = std::chrono::system_clock::now().time_since_epoch() - start;

auto millis = std::chrono::duration_cast(duration).count();

float expectedMillis = millis / percentage;

sprintf(percentageText, "%i%% %0.2f / %0.2f seconds (%0.2f remaining)", int(percentage*100.0f), ((float)millis) / 1000.0f, expectedMillis / 1000.0f, (expectedMillis - millis) / 1000.0f);

printf("%s", percentageText);

}

// get a reference to our output sample

TAmplitude &outputSample = samples[sampleIndex.Value()];

// figure out the first reverb index and input index we should process.

TSamples startReverbIndex(0);

TSamples startInputIndex(0);

if (sampleIndex < TSamples(reverbData.size()))

startReverbIndex = TSamples(reverbData.size()) - sampleIndex - TSamples(1);

else

startInputIndex = sampleIndex - TSamples(reverbData.size());

// for each reverb sample

for (TSamples reverbIndex(startReverbIndex), numReverbSamples(reverbData.size()), inputIndex(startInputIndex), numInputSamples(inputData.size());

reverbIndex < numReverbSamples && inputIndex < numInputSamples;

++reverbIndex, ++inputIndex)

{

const TAmplitude &inputSample = inputData[inputIndex.Value()];

const TAmplitude &reverbSample = reverbData[reverbIndex.Value()];

outputSample += inputSample * reverbSample;

}

}

// normalize the reverb to the wetness volume level

NormalizeSamples(samples, c_reverbWetness, TSamples(0));

// apply the dry sound on top, per the wetness settings

for (TSamples inputIndex = TSamples(0), numInputSamples(inputData.size()); inputIndex < numInputSamples; ++inputIndex)

{

const TAmplitude &inputSample = inputData[inputIndex.Value()];

TAmplitude &outputSample = samples[inputIndex.Value()];

outputSample += inputSample * (TAmplitude(1.0f) - c_reverbWetness);

}

// normalize the amplitude of the samples to make sure they are as loud as possible without clipping.

// give 3db of headroom and also put an envelope on the front and back that is 50ms

NormalizeSamples(samples, DBToAmplitude(TDecibels(-3.0f)), MilliSecondsToSamples(sound, 50.0f));

// save as a wave file

WriteWaveFile("out.wav", samples, sound);

return 0;

}

Intuition

I had to think about it for a bit but the intuition for why this works is actually pretty straight forward!

The impulse response represents all the echos that occur over time if you make a single sample of 1.0 amplitude. This is your guide for how all samples in an impulse source (IS) should be transformed, where IS is the sound you want to add reverb to.

So, starting out, you put the entire IR into your output buffer starting at output[0] (the first sample in the output buffer), multiplied by IS[0] (the first sample of the sound you are reverbing).

This leaves you with the proper reverb of the first sample in your IS, and your total output buffer length is the same length as your IR.

We only have one sample reverbed though, so we move to the next…

Next, you put the entire IR into the output buffer again, but this time start at output[1] (the second sample in the output buffer) and those IR values you are writing need to be multiplied by IS[1] (the second sample of the sound you are reverbing).

You now have the proper reverb for the first two samples in your IS, and your total output buffer length is the length of your IR + 1.

Rinse and repeat for all samples in your IS, and at the end, the reverb of all samples in your IS will be accounted for in your output buffer, and the size of your output buffer will be the length of your IR plus the length of your IS, minus one.

Pretty simple and intuitive right?

You are essentially playing the IR in the output buffer length(IS) times, where IS[outputBufferIndex] determines the volume (amplitude) that you need to play the IR at.

Convolution does exactly this, which turns out to be pretty slow due to it being a lot of math to preform.

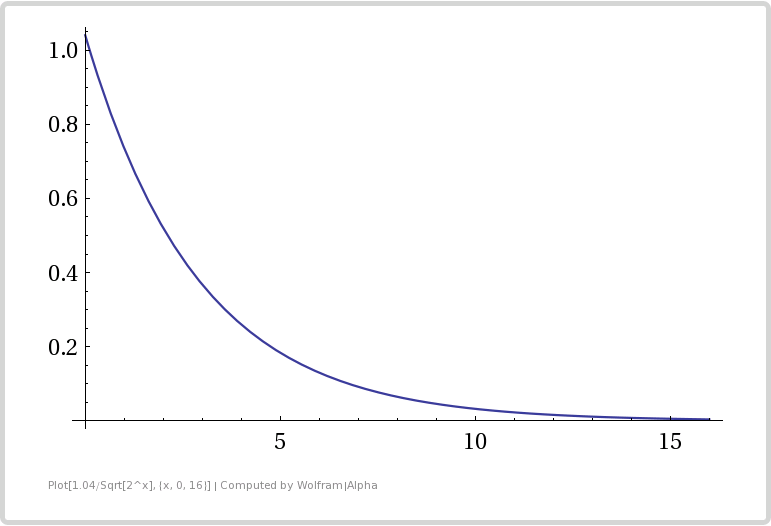

If you have a source file (IS) you are reverbing that is 4 seconds long, running at 44100 samples per second (176,400 samples total), and you have a reverb impulse response (IR) that is 2 seconds long at 44100 samples per second (88,200 samples total), that means that you are going to essentially be mixing the IR into an output buffer 176,400 times.

Each time you play the IR you need to do a multiplication per IR sample (to scale it to the IS[index] amplitude) and an addition to add it to the resulting output buffer.

At 88,200 IR samples with a multiply and add for each sample, and doing that 176,400 times, that means at the end you will need to do 15,558,480,000 (15.5 billion) multiplies and adds.

And remember… that is only for a 4 second sound that is receiving a 2 second reverb… those are pretty small sound files involved! And, that is only a single channel. That would double in stereo, and be even worse in 5.1 or 7.1 surround sound!

More Info

So, this method works, but unfortunately it’s pretty darn slow (it took like 5 minutes for me to convolve the legend quote with the large reverb). It could be made faster by introducing threading and SSE instructions, but there is a better way that gives us an even bigger speed up.

Having audio samples means you are working in the “time domain”. If you take a fourier transform of the audio samples, you’ll have the information in the “frequency domain”. As it turns out, if you do a multiplication in the frequency domain, that is equivalent to doing a convolution in the time domain. Using the fast fourier transform on your input sound and multiplying that by the pre-transformed FFT of the impulse response, and then doing an inverse FFT on the result to get back into the time domain (aka audio samples) is MUCH FASTER than doing actual convolution.

Another problem with convolution reverb is that you need the full sound you are convolving, which makes it basically impossible to use in a live setup. The solution to this is that people convolve windows of data at a time, instead of the whole sound. I think a common window size is 256 samples.

I’ll make a post in the future that addresses both those issues to allow for fast, real time convolution reverb via windowed FFT multiplication.

Also, I said in the last post that this technique is what the pros do. We’ll I should add that this is what they do SOMETIMES. Other times they use DSP algorithms involving comb filters and multi tap delays (and other things) to make fast reverb that sounds pretty decent without needing to do convolution or FFT/IFFT. Check the links section for a link to the details of one such algorithm.

Regarding IR samples (Impulse Response recordings), if you record your own, it should work as is. However, a common thing that people do is remove the loud sound from the impulse response (via deconvolution i think?) to get ONLY the impulse response. It basically makes it so that the IR really is just an IR of that room as if you had a single sample of a “1.0” echoing in the area. Without this step, your reverb convolution will still work, but you’ll get “smearing” of the sound, due to it not being a true 1.0 sample (or in other words, not a true dirac delta).

Luckily there are IR’s available for download online as well. Some are free, some are pay. Check the links section for a few links I found for that (:

Lastly, this post basically teaches you how to do 1 dimensional convolution, showing you an application for it. You can do convolution in higher dimensions as well though, and in fact if you have used photoshop and know about things like gaussian blur, sharpen mask, etc, those guys work by 2d convolution. Bokeh is even apparently convolution, as is a “Minkowski Sum” if you have done work in game physics.

Ill make a graphics related 2d convolution post in the future as well to delve into that stuff a bit deeper.

As one final sound sample, check out this CROSS CORRELATION (not convolution) of SoundBite.wav and ReverbLarge. Cross correlation is the same as convolution except you don’t reverse one of the samples. So, in effect, it’s like I convolved SoundBite.wav with ReverbLarge.wav played backwards.

More info on cross correlation coming soon. It has uses in audio, but more often it’s used for finding time delays of sounds compared to other sounds, recognizing patterns in sound data even with the presence of noise / distortion, versus having actual audible uses (although there are some of those too!).

Links

A good read explaining 1d and 2d convolution

More info on convolution reverb

Even more info on convolution reverb

Explains discrete convolution and has an interactive demo

Khan Academy: Continuous Convolution

Info on faked reverb

More info on faked reverb

A reverb blog!

Some free IRs you can download