A web demo that implements the formulas from this post is available at http://demofox.org/gauss.html

There is also this utility, which has some really sophisticated features: https://github.com/manuelbua/blur-ninja

In this post we are going to take the concepts we went over in the last post (Box Blur) and apply them to Gaussian blurring.

At a high level, Gaussian blurring works just like box blurring. There is a weight per pixel, and for each pixel you apply the weights to that pixel and its neighbors to come up with the final value for the blurred pixel.

With true Gaussian blurring, however, the function that defines the weights for each pixel technically never reaches zero. It just gets smaller and smaller with distance. In theory this makes a Gaussian kernel infinitely large. In practice, though, you can choose a cutoff point and call it good enough.

The parameters to a Gaussian blur are:

- Sigma ($\sigma$) – This defines how much blur there is. A larger number means more blur.

- Radius – The size of the kernel in pixels. The appropriate size can be calculated from sigma; more information on that below.

Just like a box blur, a Gaussian blur is separable, which means that you can either apply a 2d convolution kernel or apply a 1d convolution kernel on each axis. Doing a single 2d convolution means more calculations, but you only need one buffer to put the results into. Doing two 1d convolutions (one on each axis) ends up being fewer calculations, but requires two buffers to put the results into (one intermediate buffer to hold the first axis results). For a kernel that is k pixels wide, that is k×k multiplies per pixel for the 2d version versus 2k for the two 1d passes.
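To make the two-pass approach concrete, here is a minimal C++ sketch of a separable blur on a single-channel float image. `GrayImage` and `SeparableBlur` are hypothetical names for illustration, not part of the code later in this post. Out-of-bounds samples are treated as black, the same way the post's `GetPixelOrBlack` does. The horizontal pass writes into an intermediate buffer and the vertical pass reads from it.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical single-channel float image, stored row-major.
struct GrayImage
{
    int width = 0;
    int height = 0;
    std::vector<float> pixels;

    float At(int x, int y) const
    {
        // Out-of-bounds reads return black, like GetPixelOrBlack in the post's code.
        if (x < 0 || x >= width || y < 0 || y >= height)
            return 0.0f;
        return pixels[std::size_t(y) * width + x];
    }
};

// Separable blur: a horizontal pass into an intermediate buffer, then a
// vertical pass into the output. About 2*k multiplies per pixel instead of k*k.
GrayImage SeparableBlur(const GrayImage& src, const std::vector<float>& kernel)
{
    assert(kernel.size() % 2 == 1); // odd size so the kernel has a center tap
    const int half = int(kernel.size() / 2);

    GrayImage tmp = src; // intermediate buffer holding the horizontal results
    GrayImage dst = src; // final output buffer

    // horizontal pass: src -> tmp
    for (int y = 0; y < src.height; ++y)
        for (int x = 0; x < src.width; ++x)
        {
            float sum = 0.0f;
            for (int i = 0; i < int(kernel.size()); ++i)
                sum += src.At(x + i - half, y) * kernel[i];
            tmp.pixels[std::size_t(y) * src.width + x] = sum;
        }

    // vertical pass: tmp -> dst
    for (int y = 0; y < src.height; ++y)
        for (int x = 0; x < src.width; ++x)
        {
            float sum = 0.0f;
            for (int i = 0; i < int(kernel.size()); ++i)
                sum += tmp.At(x, y + i - half) * kernel[i];
            dst.pixels[std::size_t(y) * src.width + x] = sum;
        }

    return dst;
}
```

Blurring a single white pixel with the 1d kernel {0.25, 0.5, 0.25} leaves each output pixel equal to the product of the horizontal and vertical weights at that offset, which is what one 2d convolution with the outer-product kernel would produce.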

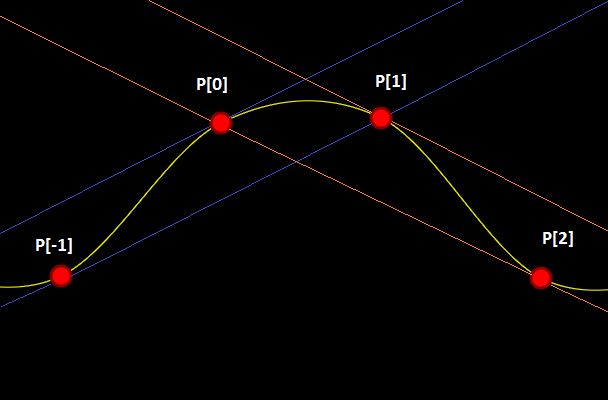

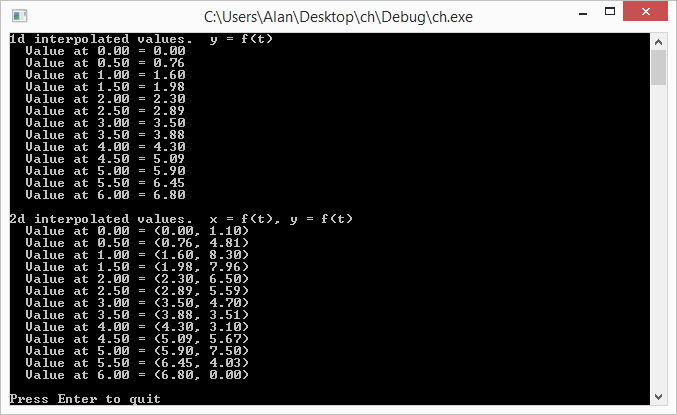

Here is a 3 pixel 1d Gaussian Kernel for a sigma of 1.0:

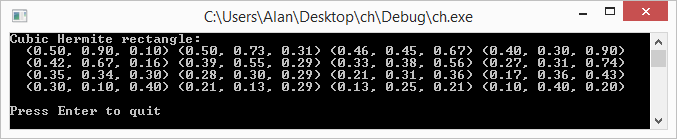

Below is a 3×3 pixel 2d Gaussian Kernel also with a sigma of 1.0. Note that this can be calculated as an outer product (tensor product) of 1d kernels!
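Here is a small sketch of building the 2d kernel as that outer product. `OuterProductKernel` is a hypothetical helper. It takes separate horizontal and vertical kernels, so it also covers blurs with a different sigma on each axis.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Builds a 2D kernel as the outer product of a horizontal and a vertical 1D kernel:
// k2d[y][x] = ky[y] * kx[x]. If both 1D kernels sum to 1, so does the 2D kernel.
std::vector<std::vector<float>> OuterProductKernel(const std::vector<float>& kx,
                                                   const std::vector<float>& ky)
{
    assert(!kx.empty() && !ky.empty());
    std::vector<std::vector<float>> k2d(ky.size(), std::vector<float>(kx.size()));
    for (std::size_t y = 0; y < ky.size(); ++y)
        for (std::size_t x = 0; x < kx.size(); ++x)
            k2d[y][x] = ky[y] * kx[x];
    return k2d;
}
```

Feeding it a 3-tap sigma 1.0 kernel of roughly {0.279, 0.442, 0.279} on both axes gives a 3×3 kernel whose center weight is about 0.442² ≈ 0.195.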

An interesting property of Gaussian blurs is that applying multiple smaller blurs gives the same result as applying a single larger blur. Unfortunately, doing multiple smaller blurs takes more calculations, so it is not usually worthwhile.

If you apply multiple blurs, the equivalent blur is the square root of the sum of the squares of the individual blurs (more precisely, the sigmas combine this way, because the variances $\sigma^2$ add). Taking Wikipedia’s example, if you applied a blur with radius 6 and a blur with radius 8, you’d end up with the equivalent of a radius 10 blur. This is because $\sqrt{6^2 + 8^2} = 10$.
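One way to check this numerically is to convolve two 1d kernels together (blurring with one and then the other is the same as blurring once with their convolution) and measure the spread of the result. This sketch assumes point-sampled, normalized kernels wide enough (about ±5 sigma) that truncation barely matters; the helper names are hypothetical. It works with sigmas rather than radii, since the variance $\sigma^2$ of a kernel is what adds under convolution.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Point-sampled, normalized Gaussian kernel with `taps` entries centered on zero.
std::vector<double> SampledGaussian(double sigma, int taps)
{
    assert(sigma > 0.0 && taps > 0 && taps % 2 == 1);
    std::vector<double> k(taps);
    double total = 0.0;
    for (int i = 0; i < taps; ++i)
    {
        double x = double(i - taps / 2);
        k[i] = std::exp(-(x * x) / (2.0 * sigma * sigma));
        total += k[i];
    }
    for (double& w : k)
        w /= total;
    return k;
}

// Full 1D convolution of two kernels: blurring with a, then b, equals blurring once with this.
std::vector<double> Convolve(const std::vector<double>& a, const std::vector<double>& b)
{
    std::vector<double> out(a.size() + b.size() - 1, 0.0);
    for (std::size_t i = 0; i < a.size(); ++i)
        for (std::size_t j = 0; j < b.size(); ++j)
            out[i + j] += a[i] * b[j];
    return out;
}

// Variance (sigma squared) of a normalized, symmetric kernel, measured around its center tap.
double KernelVariance(const std::vector<double>& k)
{
    const double center = double(k.size() / 2);
    double v = 0.0;
    for (std::size_t i = 0; i < k.size(); ++i)
        v += k[i] * (double(i) - center) * (double(i) - center);
    return v;
}
```

Convolving a sigma 6 kernel (61 taps) with a sigma 8 kernel (81 taps) yields a kernel whose variance is very close to 36 + 64 = 100, so its sigma is very close to 10.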

Calculating The Kernel

There are a couple of ways to calculate a Gaussian kernel.

Believe it or not, Pascal’s triangle approaches the Gaussian bell curve as the row number approaches infinity. If you remember, Pascal’s triangle also gives the coefficients of each term when you expand the binomial $(x+y)^N$. So technically, you could use a row from Pascal’s triangle as a 1d kernel and normalize the result, but it isn’t the most accurate.
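A sketch of the Pascal’s triangle approach, using a hypothetical `PascalKernel` helper. It builds row `n` by summing adjacent entries of the previous row, then divides by the row’s total, which is always $2^n$.

```cpp
#include <cassert>
#include <vector>

// Row `n` of Pascal's triangle (the binomial coefficients of (x + y)^n),
// normalized so the weights sum to 1. Row n gives a kernel with n + 1 taps.
std::vector<double> PascalKernel(int n)
{
    assert(n >= 0 && n < 64); // keeps 2^n representable as a 64-bit shift
    std::vector<double> row(1, 1.0);
    for (int r = 1; r <= n; ++r)
    {
        // each new row starts and ends with 1; inner entries sum adjacent entries above
        std::vector<double> next(r + 1, 1.0);
        for (int i = 1; i < r; ++i)
            next[i] = row[i - 1] + row[i];
        row = next;
    }
    const double total = double(1ull << n); // entries of row n sum to 2^n
    for (double& w : row)
        w /= total;
    return row;
}
```

For example, row 4 is 1 4 6 4 1, which normalizes to 1/16, 4/16, 6/16, 4/16, 1/16. Since row $n$ describes a binomial distribution with variance $n/4$, it approximates a Gaussian with $\sigma = \sqrt{n}/2$, so a sigma of 1.0 corresponds to roughly row 4.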

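A better way is to use the Gaussian function, which is this:

$$G(x) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{x^2}{2\sigma^2}}$$

where $\sigma$ is your blur amount and $x$ ranges across the kernel’s offsets from negative to positive. For instance, if your kernel has 5 values, $x$ ranges from -2 to +2. Because the weights get normalized afterward, the $\frac{1}{\sqrt{2\pi\sigma^2}}$ factor cancels out, which is why the example code only evaluates the exponential.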
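Point-sampling that function looks like this as a minimal sketch. The name `SampledGaussianKernel` is hypothetical; the kernel size is passed in and assumed odd so there is a center tap at x = 0.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Point-samples G(x) = exp(-x^2 / (2 sigma^2)) at integer offsets x = -taps/2 .. +taps/2,
// then normalizes. The 1/sqrt(2 pi sigma^2) factor is left out because normalizing cancels it.
std::vector<float> SampledGaussianKernel(float sigma, int taps)
{
    assert(sigma > 0.0f && taps > 0 && taps % 2 == 1);
    std::vector<float> kernel(taps);
    float total = 0.0f;
    for (int i = 0; i < taps; ++i)
    {
        float x = float(i - taps / 2); // e.g. taps = 5 gives x = -2, -1, 0, 1, 2
        kernel[i] = std::exp(-(x * x) / (2.0f * sigma * sigma));
        total += kernel[i];
    }
    for (float& w : kernel)
        w /= total;
    return kernel;
}
```

With sigma 1.0 and 5 taps, the normalized weights come out symmetric, with the largest weight (about 0.403) in the middle.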

An even better way is to integrate the Gaussian function across each pixel (from $x - 0.5$ to $x + 0.5$) instead of just taking point samples. You can read about it in the “Gaussian Kernel Calculator” link at the bottom. It’s also what the example code does, approximating each integral with Simpson’s rule.
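As a sketch of the integration approach: the integral of $e^{-x^2/(2\sigma^2)}$ over a pixel has a closed form using the error function, available in C++ as `std::erf`. Below, `GaussianIntegral` computes it exactly and `GaussianSimpson` mirrors the Simpson’s rule approximation used by `GaussianSimpsonIntegration` in the post’s code; both helper names are hypothetical. The constant factor in front of the `erf` difference cancels once the taps are normalized.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Exact integral of exp(-x^2 / (2 sigma^2)) over [a, b]:
// sigma * sqrt(pi / 2) * (erf(b / (sigma sqrt 2)) - erf(a / (sigma sqrt 2))).
double GaussianIntegral(double sigma, double a, double b)
{
    const double pi = 3.14159265358979323846;
    const double s = sigma * std::sqrt(2.0);
    return sigma * std::sqrt(pi / 2.0) * (std::erf(b / s) - std::erf(a / s));
}

// Simpson's rule approximation of the same integral.
double GaussianSimpson(double sigma, double a, double b)
{
    auto g = [sigma](double x) { return std::exp(-(x * x) / (2.0 * sigma * sigma)); };
    return ((b - a) / 6.0) * (g(a) + 4.0 * g((a + b) / 2.0) + g(b));
}

// Each tap gets the area under the curve across its pixel, [x - 0.5, x + 0.5];
// the taps are then normalized to sum to 1.
std::vector<double> IntegratedKernel(double sigma, int taps, bool useSimpson)
{
    assert(sigma > 0.0 && taps > 0 && taps % 2 == 1);
    std::vector<double> k(taps);
    double total = 0.0;
    for (int i = 0; i < taps; ++i)
    {
        double x = double(i - taps / 2);
        k[i] = useSimpson ? GaussianSimpson(sigma, x - 0.5, x + 0.5)
                          : GaussianIntegral(sigma, x - 0.5, x + 0.5);
        total += k[i];
    }
    for (double& w : k)
        w /= total;
    return k;
}
```

For sigma 1.0 and 3 taps, the exact version gives roughly [0.27901, 0.44198, 0.27901], and the Simpson’s rule version differs from it only in the fourth decimal place.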

Whichever way you do it, make sure to normalize the result so that the weights add up to 1. This ensures that blurring doesn’t make the image brighter (weights summing to more than 1) or dimmer (weights summing to less than 1).
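A quick way to see why normalization matters is to blur a flat region, where every pixel has the same value. This minimal sketch uses hypothetical `ApplyKernel` and `Normalize` helpers and made-up pixel values.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Weighted sum of a neighborhood: the core operation of any blur kernel.
float ApplyKernel(const std::vector<float>& neighborhood, const std::vector<float>& weights)
{
    assert(neighborhood.size() == weights.size());
    float sum = 0.0f;
    for (std::size_t i = 0; i < weights.size(); ++i)
        sum += neighborhood[i] * weights[i];
    return sum;
}

// Divides every weight by the total so the weights sum to 1.
std::vector<float> Normalize(std::vector<float> weights)
{
    float total = 0.0f;
    for (float w : weights)
        total += w;
    for (float& w : weights)
        w /= total;
    return weights;
}
```

With the unnormalized weights {1, 2, 1}, a flat region of value 100 comes out as 400, four times brighter. After normalizing to {0.25, 0.5, 0.25}, it stays at 100.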

Calculating The Kernel Size

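Given a sigma value, you can calculate the size of the kernel you need by using this formula:

$$1 + 2\sqrt{-2\sigma^2 \ln 0.005}$$

That formula makes a kernel large enough that it only cuts off once the (unnormalized) Gaussian falls below 0.5% of its peak value. Solving $e^{-x^2/(2\sigma^2)} = 0.005$ gives the half-width $x = \sqrt{-2\sigma^2 \ln 0.005}$, and the kernel spans $-x$ to $+x$ plus the center pixel. You can adjust the 0.005 higher or lower depending on how you want to trade speed against quality.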
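Here is a sketch of that calculation. The hypothetical `KernelTapsForSigma` solves for the half-width where the curve drops to the threshold, rounds it up, and adds the center tap, so it always returns an odd size. It is arranged slightly differently from the post’s `PixelsNeededForSigma` (whose result the example code forces odd with `| 1`), but for sigmas of 1, 3, 20 and 50 the two produce the same kernel sizes.

```cpp
#include <cassert>
#include <cmath>

// Number of taps so the kernel extends out to where exp(-x^2 / (2 sigma^2))
// falls to `threshold` of its peak: x = sqrt(-2 sigma^2 ln(threshold)).
// The half-width is rounded up and the center tap added, so the count is always odd.
int KernelTapsForSigma(double sigma, double threshold = 0.005)
{
    assert(sigma > 0.0 && threshold > 0.0 && threshold < 1.0);
    const double halfWidth = std::sqrt(-2.0 * sigma * sigma * std::log(threshold));
    return 2 * int(std::ceil(halfWidth)) + 1;
}
```

For sigma 3, for example, the half-width is about 9.77 pixels, which rounds up to 10, giving 21 taps.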

Examples

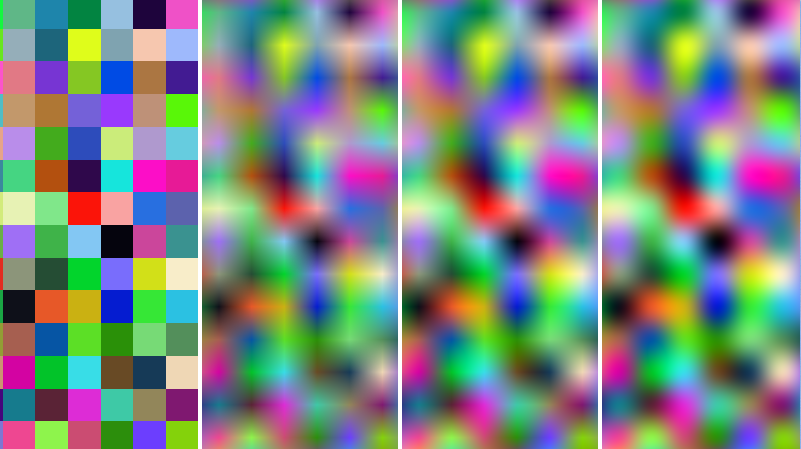

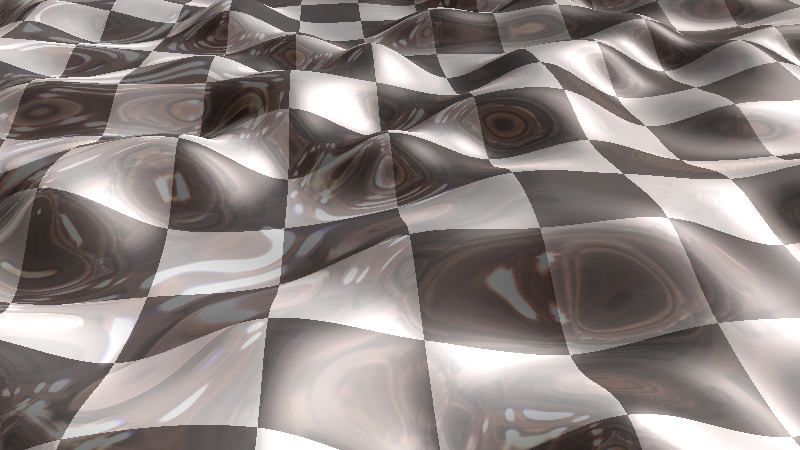

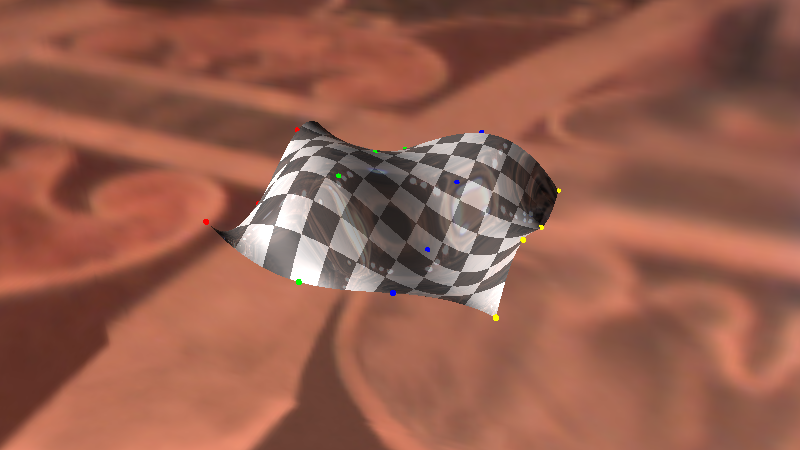

Once again, here is the unaltered image we are working with:

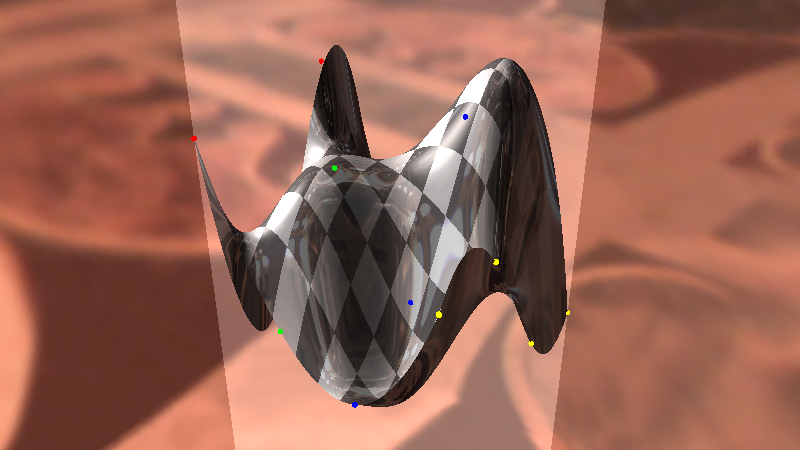

Here is the image blurred with a sigma of 3,3 (3 on the x axis and 3 on the y axis):

Here is the image blurred with a sigma of 20,3:

Here is the image blurred with a sigma of 50,50:

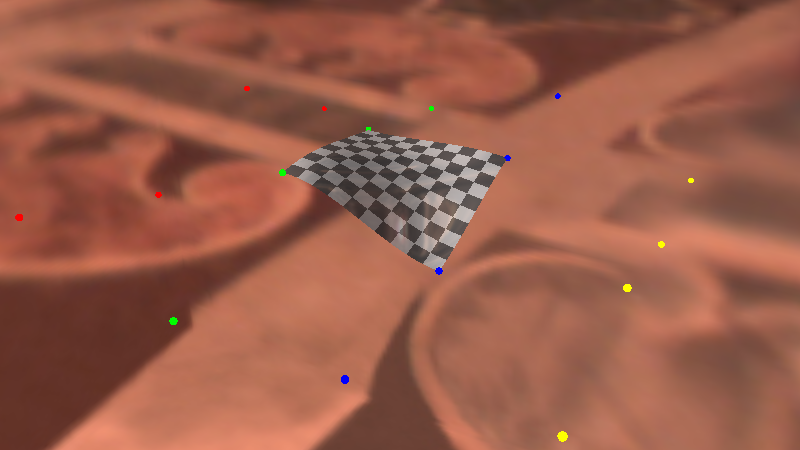

Shadertoy

Here’s a shadertoy implementing Gaussian Blur: Shadertoy:DF Gaussian Blur

Code

Here’s the source code I used to blur the examples above:

```cpp
#define _CRT_SECURE_NO_WARNINGS

#include <stdio.h>
#include <stdint.h>
#include <math.h>
#include <array>
#include <vector>
#include <functional>
#include <windows.h> // for bitmap headers. Sorry non windows people!

typedef uint8_t uint8;

const float c_pi = 3.14159265359f;

struct SImageData
{
    SImageData()
        : m_width(0)
        , m_height(0)
        , m_pitch(0)
    { }

    long m_width;
    long m_height;
    long m_pitch;
    std::vector<uint8> m_pixels;
};

void WaitForEnter ()
{
    printf("Press Enter to quit");
    fflush(stdin);
    getchar();
}

bool LoadImage (const char *fileName, SImageData& imageData)
{
    // open the file if we can
    FILE *file;
    file = fopen(fileName, "rb");
    if (!file)
        return false;

    // read the headers if we can
    BITMAPFILEHEADER header;
    BITMAPINFOHEADER infoHeader;
    if (fread(&header, sizeof(header), 1, file) != 1 ||
        fread(&infoHeader, sizeof(infoHeader), 1, file) != 1 ||
        header.bfType != 0x4D42 || infoHeader.biBitCount != 24)
    {
        fclose(file);
        return false;
    }

    // read in our pixel data if we can. Note that it's in BGR order, and each row
    // is padded to the next multiple of 4 bytes
    imageData.m_pixels.resize(infoHeader.biSizeImage);
    fseek(file, header.bfOffBits, SEEK_SET);
    if (fread(&imageData.m_pixels[0], imageData.m_pixels.size(), 1, file) != 1)
    {
        fclose(file);
        return false;
    }

    imageData.m_width = infoHeader.biWidth;
    imageData.m_height = infoHeader.biHeight;
    imageData.m_pitch = imageData.m_width*3;
    if (imageData.m_pitch & 3)
    {
        imageData.m_pitch &= ~3;
        imageData.m_pitch += 4;
    }

    fclose(file);
    return true;
}

bool SaveImage (const char *fileName, const SImageData &image)
{
    // open the file if we can
    FILE *file;
    file = fopen(fileName, "wb");
    if (!file)
        return false;

    // make the header info
    BITMAPFILEHEADER header;
    BITMAPINFOHEADER infoHeader;

    header.bfType = 0x4D42;
    header.bfReserved1 = 0;
    header.bfReserved2 = 0;
    header.bfOffBits = 54;

    infoHeader.biSize = 40;
    infoHeader.biWidth = image.m_width;
    infoHeader.biHeight = image.m_height;
    infoHeader.biPlanes = 1;
    infoHeader.biBitCount = 24;
    infoHeader.biCompression = 0;
    infoHeader.biSizeImage = image.m_pixels.size();
    infoHeader.biXPelsPerMeter = 0;
    infoHeader.biYPelsPerMeter = 0;
    infoHeader.biClrUsed = 0;
    infoHeader.biClrImportant = 0;

    header.bfSize = infoHeader.biSizeImage + header.bfOffBits;

    // write the data and close the file
    fwrite(&header, sizeof(header), 1, file);
    fwrite(&infoHeader, sizeof(infoHeader), 1, file);
    fwrite(&image.m_pixels[0], infoHeader.biSizeImage, 1, file);
    fclose(file);
    return true;
}

int PixelsNeededForSigma (float sigma)
{
    // returns the number of pixels needed to represent a gaussian kernel that has values
    // down to the threshold amount. A gaussian function technically has values everywhere
    // on the image, but the threshold lets us cut it off where the pixels contribute to
    // only small amounts that aren't as noticeable.
    const float c_threshold = 0.005f; // 0.5%
    return int(floor(1.0f + 2.0f * sqrtf(-2.0f * sigma * sigma * log(c_threshold)))) + 1;
}

float Gaussian (float sigma, float x)
{
    // unnormalized gaussian: the 1/sqrt(2*pi*sigma^2) factor cancels when the kernel is normalized
    return expf(-(x*x) / (2.0f * sigma*sigma));
}

float GaussianSimpsonIntegration (float sigma, float a, float b)
{
    // Simpson's rule approximation of the integral of Gaussian() from a to b
    return
        ((b - a) / 6.0f) *
        (Gaussian(sigma, a) + 4.0f * Gaussian(sigma, (a + b) / 2.0f) + Gaussian(sigma, b));
}

std::vector<float> GaussianKernelIntegrals (float sigma, int taps)
{
    std::vector<float> ret;
    float total = 0.0f;
    for (int i = 0; i < taps; ++i)
    {
        // integrate across this pixel's footprint [x - 0.5, x + 0.5], centered on the middle tap
        float x = float(i) - float(taps / 2);
        float value = GaussianSimpsonIntegration(sigma, x - 0.5f, x + 0.5f);
        ret.push_back(value);
        total += value;
    }

    // normalize it
    for (unsigned int i = 0; i < ret.size(); ++i)
    {
        ret[i] /= total;
    }

    return ret;
}

const uint8* GetPixelOrBlack (const SImageData& image, int x, int y)
{
    static const uint8 black[3] = { 0, 0, 0 };
    if (x < 0 || x >= image.m_width ||
        y < 0 || y >= image.m_height)
    {
        return black;
    }

    return &image.m_pixels[(y * image.m_pitch) + x * 3];
}

void BlurImage (const SImageData& srcImage, SImageData &destImage, float xblursigma, float yblursigma, unsigned int xblursize, unsigned int yblursize)
{
    // allocate space for copying the image for destImage and tmpImage
    destImage.m_width = srcImage.m_width;
    destImage.m_height = srcImage.m_height;
    destImage.m_pitch = srcImage.m_pitch;
    destImage.m_pixels.resize(destImage.m_height * destImage.m_pitch);

    SImageData tmpImage;
    tmpImage.m_width = srcImage.m_width;
    tmpImage.m_height = srcImage.m_height;
    tmpImage.m_pitch = srcImage.m_pitch;
    tmpImage.m_pixels.resize(tmpImage.m_height * tmpImage.m_pitch);

    // horizontal blur from srcImage into tmpImage
    {
        auto row = GaussianKernelIntegrals(xblursigma, xblursize);

        int startOffset = -1 * int(row.size() / 2);

        for (int y = 0; y < tmpImage.m_height; ++y)
        {
            for (int x = 0; x < tmpImage.m_width; ++x)
            {
                std::array<float, 3> blurredPixel = { 0.0f, 0.0f, 0.0f };
                for (unsigned int i = 0; i < row.size(); ++i)
                {
                    // cast i to int so the offset math stays signed near the left edge
                    const uint8 *pixel = GetPixelOrBlack(srcImage, x + startOffset + int(i), y);
                    blurredPixel[0] += float(pixel[0]) * row[i];
                    blurredPixel[1] += float(pixel[1]) * row[i];
                    blurredPixel[2] += float(pixel[2]) * row[i];
                }

                uint8 *destPixel = &tmpImage.m_pixels[y * tmpImage.m_pitch + x * 3];

                destPixel[0] = uint8(blurredPixel[0]);
                destPixel[1] = uint8(blurredPixel[1]);
                destPixel[2] = uint8(blurredPixel[2]);
            }
        }
    }

    // vertical blur from tmpImage into destImage
    {
        auto row = GaussianKernelIntegrals(yblursigma, yblursize);

        int startOffset = -1 * int(row.size() / 2);

        for (int y = 0; y < destImage.m_height; ++y)
        {
            for (int x = 0; x < destImage.m_width; ++x)
            {
                std::array<float, 3> blurredPixel = { 0.0f, 0.0f, 0.0f };
                for (unsigned int i = 0; i < row.size(); ++i)
                {
                    // cast i to int so the offset math stays signed near the top edge
                    const uint8 *pixel = GetPixelOrBlack(tmpImage, x, y + startOffset + int(i));
                    blurredPixel[0] += float(pixel[0]) * row[i];
                    blurredPixel[1] += float(pixel[1]) * row[i];
                    blurredPixel[2] += float(pixel[2]) * row[i];
                }

                uint8 *destPixel = &destImage.m_pixels[y * destImage.m_pitch + x * 3];

                destPixel[0] = uint8(blurredPixel[0]);
                destPixel[1] = uint8(blurredPixel[1]);
                destPixel[2] = uint8(blurredPixel[2]);
            }
        }
    }
}

int main (int argc, char **argv)
{
    float xblursigma, yblursigma;

    bool showUsage = argc < 5 ||
        (sscanf(argv[3], "%f", &xblursigma) != 1) ||
        (sscanf(argv[4], "%f", &yblursigma) != 1);

    if (showUsage)
    {
        printf("Usage: <source> <dest> <xblur> <yblur>\nBlur values are sigma\n\n");
        WaitForEnter();
        return 1;
    }

    // only read the file names once we know enough arguments were passed in
    const char *srcFileName = argv[1];
    const char *destFileName = argv[2];

    // calculate pixel sizes, and make sure they are odd
    int xblursize = PixelsNeededForSigma(xblursigma) | 1;
    int yblursize = PixelsNeededForSigma(yblursigma) | 1;

    printf("Attempting to blur a 24 bit image.\n");
    printf(" Source=%s\n Dest=%s\n blur=[%0.1f, %0.1f] px=[%d,%d]\n\n", srcFileName, destFileName, xblursigma, yblursigma, xblursize, yblursize);

    SImageData srcImage;
    if (LoadImage(srcFileName, srcImage))
    {
        printf("%s loaded\n", srcFileName);
        SImageData destImage;
        BlurImage(srcImage, destImage, xblursigma, yblursigma, xblursize, yblursize);
        if (SaveImage(destFileName, destImage))
            printf("Blurred image saved as %s\n", destFileName);
        else
        {
            printf("Could not save blurred image as %s\n", destFileName);
            WaitForEnter();
            return 1;
        }
    }
    else
    {
        printf("could not read 24 bit bmp file %s\n\n", srcFileName);
        WaitForEnter();
        return 1;
    }
    return 0;
}
```

Links

Here is a really great explanation of the Gaussian blur.

Gaussian Blur – Image processing for scientists and engineers, Part 4

I highly recommend reading the 6-part series about image processing (DSP) from the beginning, because it’s really informative and very easy to read!

Images are data – Image processing for scientists and engineers, Part 1

The Gaussian Kernel

Gaussian Kernel Calculator

DSP Stack Exchange: Gaussian Blur – standard deviation, radius and kernel size

Wikipedia: Gaussian blur

If you want to take this from theory / hobby level up to pro level, give this link from Intel a read:

Intel: An investigation of fast real-time GPU-based image blur algorithms