Real-time raytracing finally seems to be here. We have a real-time raytracing API in DirectX, another API in Vulkan, hardware support in NVIDIA cards, and this is only the beginning of this new phase of graphics.

Having hardware and API support for raytracing means we can use that to help with the usual raytraced graphics things – reflection, refraction, shadows and more – but it is also new hardware / API to abuse.

For instance, Inigo Quilez talks about how ray / triangle intersection could be used to do 3×3 linear equation solving: http://www.iquilezles.org/blog/?p=4666

In a similar style of hardware/API abuse (but not related to raytracing), I have shown how to make the linear texture interpolator calculate points on curves and surfaces when storing the control points in texels, and also showed how it can evaluate generic polynomials: https://blog.demofox.org/2016/02/22/gpu-texture-sampler-bezier-curve-evaluation/

In the not too distant future, I think it will be common to use raytracing for data lookups in real-time graphics, much like we use textures currently. In fact, from a couple of conversations I’ve had with folks on Twitter, it seems as though some people are already doing this.

As strange as it sounds, raytracing has a significant advantage over texture lookups. A texture lookup is limited to data stored in a regular grid of texels. Raytracing data lookups get their data from a mesh, with data stored in vertices (in a vertex buffer).

Storing data in the vertices of a mesh means that you can store data points wherever you want. If you need more detail in one area and less in another, you can put more vertices in the higher detail area, and fewer vertices in the low detail area. You could also store data in a blue noise sampling pattern to help fight aliasing problems you might have with the regular grid of a texture. Furthermore, you could actually have holes in your data for invalid data regions, by having holes in the mesh.

Essentially, a mesh is just a generalization of a texture. You are no longer locked to a grid!

How the data lookups are actually done is not too complex either.

For the 2d case where you have a function f(x,y), you would make a triangle mesh where the (x,y) position of each vertex was the location of a data point, and you would make the z value some constant such as 0.5.

To look up the value of the data for some (x,y) input, you could make a ray that started at (x,y,0) and went in direction (0,0,1). When you did your raytrace, you’d get as a result the triangle index and the barycentric coordinates of that triangle. From there you could look up the data from the 3 vertices of the triangle and use the barycentric coordinates to interpolate between the values. The interpolated value would be your result.

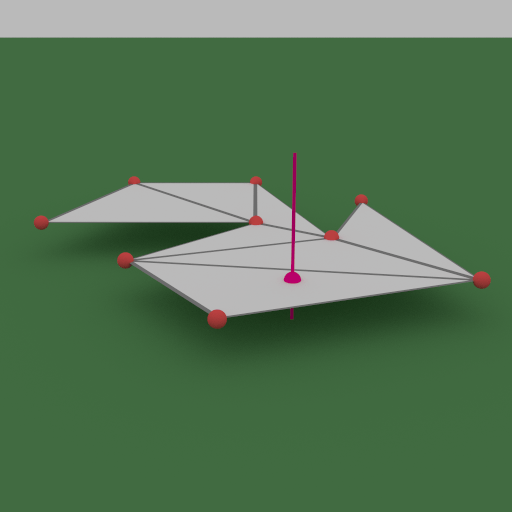

You can see how this process goes in the image below. The purple dot is the query location.
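To make the 2d lookup concrete, here is a minimal sketch in Python. It is not a real raytracing API: the brute-force loop over triangles is a stand-in for the ray query, and the names `barycentric_2d`, `lookup_2d`, `positions`, `values` and `triangles` are made up for illustration. What it does show is the part that happens after the hit: take the triangle and its barycentric coordinates, and blend the three vertex values.

```python
def barycentric_2d(p, a, b, c):
    """Barycentric weights (wa, wb, wc) of 2D point p in triangle abc."""
    (px, py), (ax, ay), (bx, by), (cx, cy) = p, a, b, c
    det = (by - cy) * (ax - cx) + (cx - bx) * (ay - cy)
    wa = ((by - cy) * (px - cx) + (cx - bx) * (py - cy)) / det
    wb = ((cy - ay) * (px - cx) + (ax - cx) * (py - cy)) / det
    return wa, wb, 1.0 - wa - wb


def lookup_2d(p, positions, values, triangles, eps=1e-9):
    """Interpolate the data at p, or return None if p is not covered.

    Assumption: this brute-force search replaces the ray shot from
    (x, y, 0) in direction (0, 0, 1); real hardware would hand back the
    triangle index and barycentrics directly.
    """
    for i0, i1, i2 in triangles:
        w = barycentric_2d(p, positions[i0], positions[i1], positions[i2])
        if min(w) >= -eps:  # inside the triangle or on its edge: the "hit"
            return w[0] * values[i0] + w[1] * values[i1] + w[2] * values[i2]
    return None  # the ray missed: a hole in the mesh, so no valid data here


# Example data: four data points on a unit square, split into two triangles.
positions = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
values = [1.0, 3.0, 4.0, 6.0]  # samples of f(x, y) = 2x + 3y + 1
triangles = [(0, 1, 2), (1, 3, 2)]
result = lookup_2d((0.25, 0.25), positions, values, triangles)
```

Because the interpolation is linear within each triangle, a linear function like the one sampled here is reproduced exactly, and a query outside the mesh comes back as a miss rather than a clamped or wrapped value.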

Just as there are volume textures to store 3d data in 3d textures, raytraced data lookups can also be extended to 3d.

For the 3d case where you have a function f(x,y,z), you would make a tetrahedral mesh where the (x,y,z) position of each vertex was the location of a data point.

To look up the value of the data for some (x,y,z) input, you need to be able to find what tetrahedron the point is in. To do this, you just shoot a ray starting at that (x,y,z) position in any arbitrary direction. That ray will hit one triangle in the tetrahedron. You then shoot a ray from the (x,y,z) position in the opposite direction to get a different triangle in the tetrahedron.

From these two triangles, you’ll have 6 vertices but only 4 will be unique. Those 4 vertices are the vertices of the tetrahedron. You can read the data from the vertices, calculate the barycentric coordinates of the point inside the tetrahedron, and then use those to interpolate the vertex data to get the result.

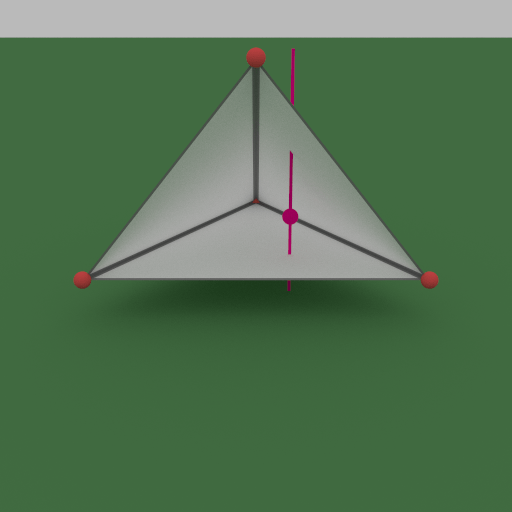

You can see how this process goes in the image below. The purple dot is the query location again.
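Here is a rough Python sketch of the step after the two raytraces in the 3d case. It assumes the two hits have already given you two triangles as triples of vertex indices (`tri_a` and `tri_b`, hypothetical names), merges them into the 4 unique vertices, and computes the barycentric coordinates of the query point as ratios of signed volumes. The function names are mine, not from any API.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def det3(u, v, w):
    """Scalar triple product u . (v x w)."""
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            - u[1] * (v[0] * w[2] - v[2] * w[0])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


def signed_volume(a, b, c, d):
    """Six times the signed volume of tetrahedron abcd."""
    return det3(sub(b, a), sub(c, a), sub(d, a))


def tetra_lookup(p, tri_a, tri_b, positions, values):
    """Interpolate vertex data at p from the two triangles the rays hit."""
    ids = sorted(set(tri_a) | set(tri_b))  # 6 indices, only 4 unique
    if len(ids) != 4:
        raise ValueError("the two triangles do not form one tetrahedron")
    a, b, c, d = (positions[i] for i in ids)
    total = signed_volume(a, b, c, d)
    # Each weight swaps one vertex for p and compares the volumes.
    weights = (
        signed_volume(p, b, c, d) / total,
        signed_volume(a, p, c, d) / total,
        signed_volume(a, b, p, d) / total,
        signed_volume(a, b, c, p) / total,
    )
    return sum(w * values[i] for w, i in zip(weights, ids))


# Example: one tetrahedron with samples of f(x, y, z) = x + 2y + 3z + 4.
positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
values = [4.0, 5.0, 6.0, 7.0]
result = tetra_lookup((0.2, 0.3, 0.1), (0, 1, 2), (0, 1, 3), positions, values)
```

The four weights sum to 1, and for a point inside the tetrahedron they are all non-negative, just like the triangle case.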

The most immediate use case I can think of for this technique would be diffuse light probe grids. Whether you had a 2d or 3d light probe grid, you’d be able to make probes as dense as needed, or as sparse as you can get away with, in different sections of the geometry. You could also make holes in the mesh to make sure data didn’t interpolate through walls, which would otherwise cause light leaking. You would use the techniques described above to interpolate the simplex data and get the result.

As this data is likely going to be relatively simple geometry compared to something like an actual game asset, it seems like it ought to be pretty fast to trace too.

Nathan Reed shared a really good idea on Twitter for doing the 3d lookup with only a single raytrace. The idea is that once you know what triangle you hit, you can look in a table to get the fourth vertex based on whether you hit the triangle from the front or the back. One way to do this would be to have a buffer that has two vertex indices per triangle. The first index would be used if you hit from the positive (front) side, the second if you hit from the negative (back) side.

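That way, the index you’d look at would be [triangleIndex*2 + (hitBackSide ? 1 : 0)]. The parentheses matter, since in C-like languages the addition would otherwise bind before the ternary. That data lookup ought to be a lot cheaper than a second raytrace!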
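Here is one way that table could be built, sketched in Python. This is my guess at a layout, not necessarily what Nathan had in mind. The assumptions: each face is stored once with its vertex indices in sorted order, "front" means the side that the normal (b - a) x (c - a) points to, and `NO_VERTEX` marks a boundary face with no tetrahedron on that side. A real API decides front versus back from winding order and its own settings, so the convention would need to match.

```python
from itertools import combinations

NO_VERTEX = -1  # hypothetical marker: no tetrahedron on that side of the face


def signed_volume(a, b, c, d):
    """Positive when d is on the side of triangle abc that its normal faces."""
    u, v, w = ([q[k] - a[k] for k in range(3)] for q in (b, c, d))
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            - u[1] * (v[0] * w[2] - v[2] * w[0])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


def build_fourth_vertex_table(positions, tetrahedra):
    """Return (faces, table): a face -> triangle index map, 2 entries per face."""
    faces = {}  # sorted vertex triple -> triangle index
    table = []  # [front vertex, back vertex] for each triangle, flattened
    for tet in tetrahedra:
        for face in combinations(sorted(tet), 3):
            (opposite,) = set(tet) - set(face)
            tri = faces.setdefault(face, len(faces))
            if tri * 2 == len(table):  # first time we see this face
                table += [NO_VERTEX, NO_VERTEX]
            a, b, c = (positions[i] for i in face)
            in_front = signed_volume(a, b, c, positions[opposite]) > 0
            table[tri * 2 + (0 if in_front else 1)] = opposite
    return faces, table


def fourth_vertex(table, triangle_index, hit_back_side):
    return table[triangle_index * 2 + (1 if hit_back_side else 0)]


# Example: two tetrahedra glued together along the face (0, 1, 2).
positions = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1), (0, 0, -1)]
faces, table = build_fourth_vertex_table(positions, [(0, 1, 2, 3), (0, 1, 2, 4)])
shared = faces[(0, 1, 2)]
front = fourth_vertex(table, shared, hit_back_side=False)  # vertex above the face
back = fourth_vertex(table, shared, hit_back_side=True)    # vertex below the face
```

If the ray hits the front of a face, the query point is on the front side, so the front entry is the fourth vertex of the tetrahedron containing it.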

Can using raytracing to do data lookups extend to 4d and beyond? Probably, but I’m not sure how. Do you know how, or have any interesting use cases? If so, please share. It’d be interesting to hear 🙂

PS – Apparently some folks are using raytracing for GPU physics. I haven’t heard any details of how other people are doing it, but I am looking forward to getting a chance to try it myself. I’m thinking Verlet physics of particles with constraints. That amounts to only needing the current and previous particle positions to get an implicit velocity, and then doing small incremental constraint solving steps to try and make things keep their shape etc. The end result is something like screen space particles / screen space physics, except it would have knowledge of the entire scene, whereas screen space techniques only have knowledge of the gbuffer. I’ve heard that short ray trace queries run a lot faster (20x?) by not needing to traverse the acceleration structure (BVH) as widely. With luck I’ll give it a try and write a post up about it before too long.
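For anyone unfamiliar with Verlet integration, here is a tiny Python sketch of the two pieces mentioned above: the position update that uses the previous position as an implicit velocity, and one relaxation step of a distance constraint between two particles. It leaves out the raytracing part entirely (that is where the short ray queries for collisions would go), and the function names are just for illustration.

```python
import math


def verlet_step(pos, prev, accel, dt):
    """Advance one particle; returns (new position, new previous position)."""
    # (pos - prev) is the implicit velocity times dt, so no velocity is stored.
    new = tuple(2.0 * p - q + a * dt * dt for p, q, a in zip(pos, prev, accel))
    return new, pos


def satisfy_distance(p0, p1, rest_length):
    """Move both particles equally so they end up rest_length apart."""
    delta = [b - a for a, b in zip(p0, p1)]
    dist = math.sqrt(sum(d * d for d in delta))
    if dist == 0.0:
        return p0, p1  # no direction to push along, so leave them alone
    corr = 0.5 * (dist - rest_length) / dist
    new_p0 = tuple(a + d * corr for a, d in zip(p0, delta))
    new_p1 = tuple(b - d * corr for b, d in zip(p1, delta))
    return new_p0, new_p1


# Example: a particle drifting along +x with no acceleration, then a
# stretched two-particle "stick" being pulled back to length 1.
pos, prev = verlet_step((1.0, 0.0, 0.0), (0.9, 0.0, 0.0), (0.0, 0.0, 0.0), 0.1)
a, b = satisfy_distance((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), 1.0)
```

With many constraints you would run the constraint step several times per frame, since fixing one constraint can slightly violate its neighbors.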
