Posts in this series:

- Basic Camera, Diffuse, Emissive

- Image Improvement and Glossy Reflections

- Fresnel, Rough Refraction & Absorption, Orbit Camera

Below is a screenshot of the shadertoy that goes with this post. That shadertoy can be found at:

https://www.shadertoy.com/view/ttfyzN

On the menu today is:

- Fresnel – This makes objects shinier at grazing angles which increases realism.

- Rough Refraction & Absorption – This makes it so we can have transparent objects, for various definitions of the term transparent.

- Orbit Camera – This lets you control the camera with the mouse to be able to see the scene from different angles

Fresnel

First up is the fresnel effect. I said I wasn’t going to do it in this series, but it really adds a lot to the end result, and we are going to need it (and related parameters) for glossy reflections anyways.

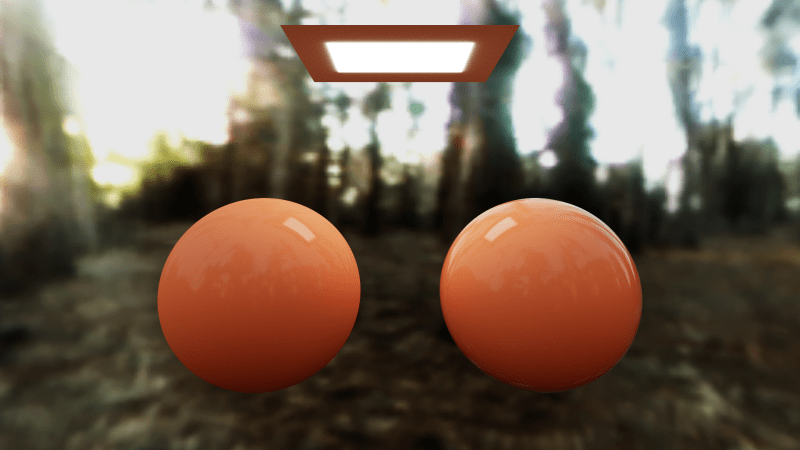

Fresnel makes objects shinier when you view them at grazing angles and helps objects look more realistic. In the image below, the left sphere does not have fresnel, but the right sphere does. Fresnel adds a better sense of depth and shape.

This is the fresnel function we are going to use. Put it in the Common tab or the Buffer A tab, above the raytracing shading code.

```glsl
float FresnelReflectAmount(float n1, float n2, vec3 normal, vec3 incident, float f0, float f90)
{
    // Schlick approximation
    float r0 = (n1-n2) / (n1+n2);
    r0 *= r0;
    float cosX = -dot(normal, incident);
    if (n1 > n2)
    {
        float n = n1/n2;
        float sinT2 = n*n*(1.0-cosX*cosX);
        // Total internal reflection
        if (sinT2 > 1.0)
            return f90;
        cosX = sqrt(1.0-sinT2);
    }
    float x = 1.0-cosX;
    float ret = r0+(1.0-r0)*x*x*x*x*x;

    // adjust reflect multiplier for object reflectivity
    return mix(f0, f90, ret);
}
```

n1 is the index of refraction (IOR) of the material the ray started in (air, for which we use 1.0). n2 is the IOR of the material of the object being hit. normal is the surface normal where the ray hit, and incident is the ray direction when it hit the object. f0 is the minimum reflection of the object (when the ray hits the surface head-on, along the normal), and f90 is the maximum reflection of the object (when the ray grazes the surface, 90 degrees away from the normal).

For the index of refraction of the objects, I used a value of 1.0 in the image with the two orange spheres above. For f90, the maximum reflection of the objects, we are just going to use 1.0 to make objects fully reflective at the edges.

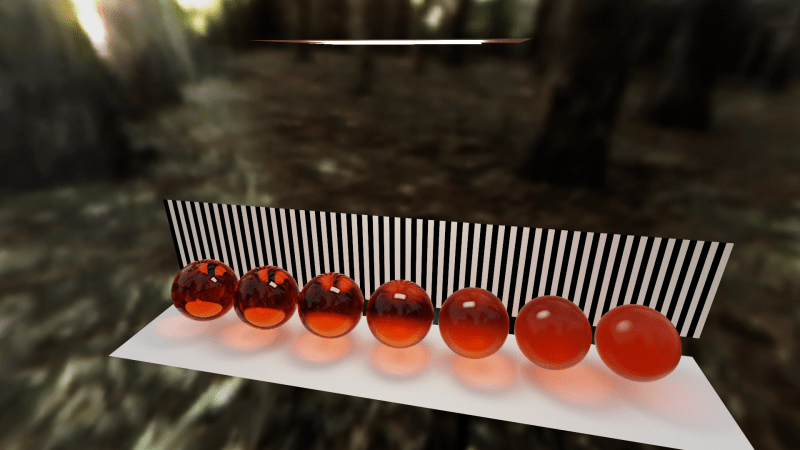

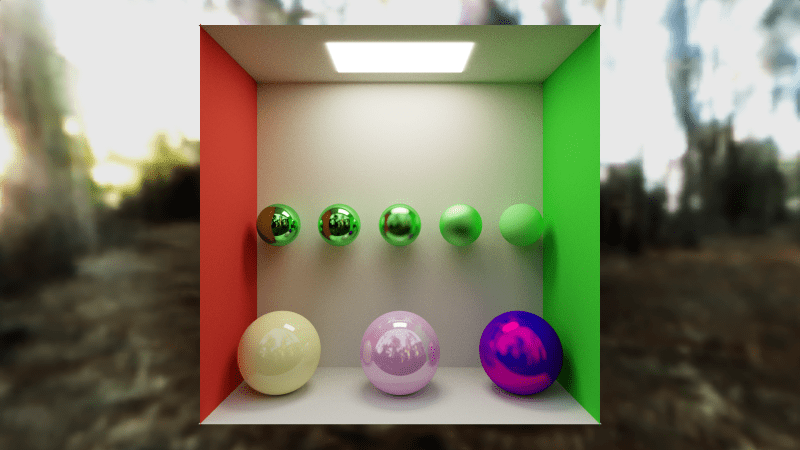

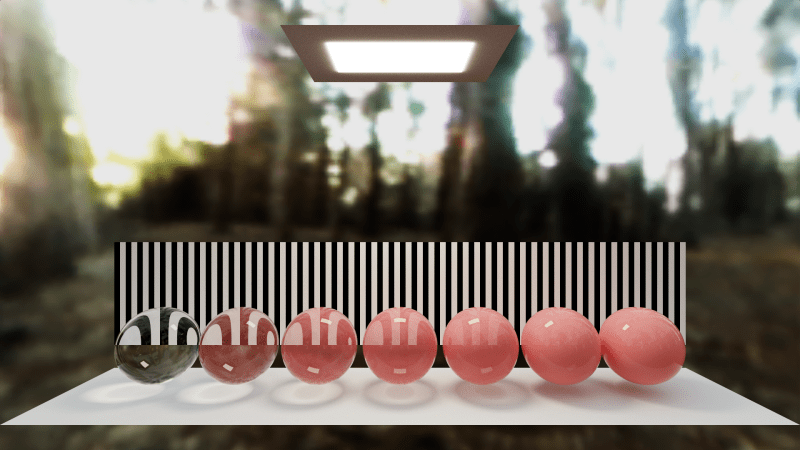

Below are spheres with a minimum reflection (reflection chance!) of 0.02, with the IOR going from 1 on the left to 2 on the right. To me, the one on the right looks like a pearl. (This is SCENE define value 2 in the shadertoy.)

To apply fresnel to our shader, find this code:

```glsl
// calculate whether we are going to do a diffuse or specular reflection ray
float doSpecular = (RandomFloat01(rngState) < hitInfo.material.percentSpecular) ? 1.0f : 0.0f;
```

Change it to this:

```glsl
// apply fresnel
float specularChance = hitInfo.material.percentSpecular;
if (specularChance > 0.0f)
{
    // arguments are (n1, n2, normal, incident, f0, f90)
    specularChance = FresnelReflectAmount(
        1.0,
        hitInfo.material.IOR,
        hitInfo.normal, rayDir, hitInfo.material.percentSpecular, 1.0f);
}

// calculate whether we are going to do a diffuse or specular reflection ray
float doSpecular = (RandomFloat01(rngState) < specularChance) ? 1.0f : 0.0f;
```

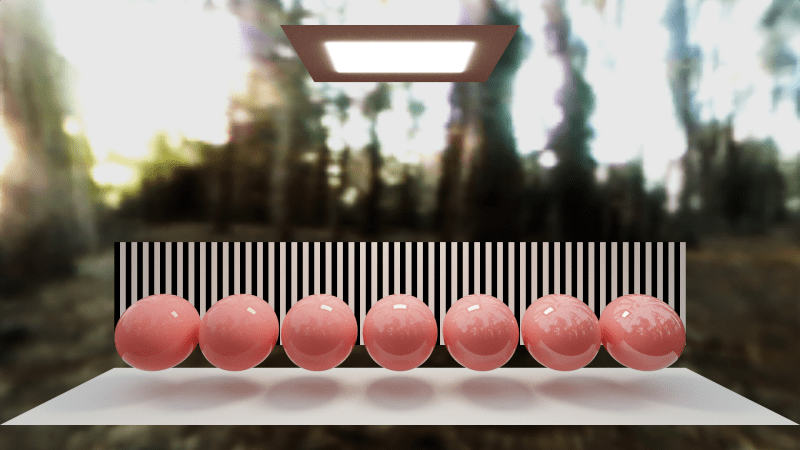

Besides that, a float for "IOR" needs to be added to SMaterialInfo too. Here is the scene from the end of last chapter, using fresnel and with everything using an IOR of 1.0.

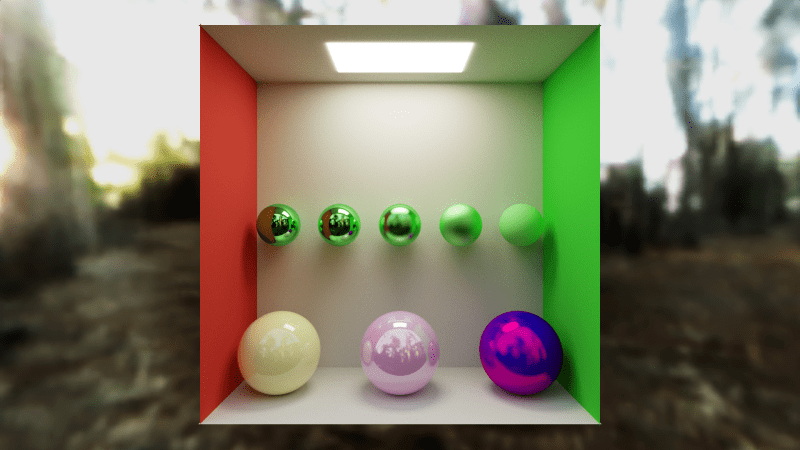

And here is the scene from the last chapter without fresnel. The difference is pretty subtle, so you might want to open each image in a browser tab and flip back and forth. The biggest difference is on the edges of the yellow and pink spheres. Fresnel makes the biggest difference for objects that are a little bit shiny; objects that are very shiny, or not shiny at all, won't show as noticeable a fresnel effect.

Better Diffuse / Specular Selection

In the last post we used a random number tested against a percent chance for specular to choose whether a reflected ray was going to be a diffuse ray or a specular ray.

When doing that, we should have also divided the throughput by the probability of the ray actually chosen (to not make one count more than the other in the final average) but we didn’t do that. This is a “mathy detail” but easy enough to put in casually, so let’s do the right thing.

Find the place where the doSpecular float is set based on the random roll. Put this underneath that:

```glsl
// get the probability for choosing the ray type we chose
float rayProbability = (doSpecular == 1.0f) ? specularChance : 1.0f - specularChance;

// avoid numerical issues causing a divide by zero, or nearly so (more important later, when we add refraction)
rayProbability = max(rayProbability, 0.001f);
```

Then put this right before doing the russian roulette:

```glsl
// since we chose randomly between diffuse and specular,
// we need to account for the times we didn't do one or the other.
throughput /= rayProbability;
```

The biggest difference from the previous image is that the pink and yellow spheres brighten up a bit, and so do their reflections, of course!
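Here is a small numeric sketch of why that division matters, written in C. Each sample picks just one of two contributions (standing in for a diffuse bounce and a specular bounce), and dividing by the probability of the pick makes the average converge to the sum of both. The xorshift32 generator is a stand-in for the shader's RandomFloat01(), and EstimateBothLobes is a hypothetical name.

```c
#include <stdint.h>

/* xorshift32: a small stand-in RNG for this sketch (not the shader's RandomFloat01). */
static float RandomFloat01(uint32_t *state)
{
    uint32_t x = *state;
    x ^= x << 13;
    x ^= x >> 17;
    x ^= x << 5;
    *state = x;
    return (float)(x >> 8) / 16777216.0f; /* top 24 bits -> [0, 1) */
}

/* Estimates diffuseValue + specularValue by choosing ONE of them per sample
   (specular with probability specularChance) and dividing by the probability
   of the choice that was made. seed must be nonzero. */
float EstimateBothLobes(float diffuseValue, float specularValue, float specularChance,
                        int numSamples, uint32_t seed)
{
    uint32_t rngState = seed;
    double sum = 0.0;
    for (int i = 0; i < numSamples; ++i)
    {
        int doSpecular = RandomFloat01(&rngState) < specularChance;
        float value = doSpecular ? specularValue : diffuseValue;
        float rayProbability = doSpecular ? specularChance : 1.0f - specularChance;
        if (rayProbability < 0.001f)
            rayProbability = 0.001f; /* same guard as the shader code */
        sum += value / rayProbability;
    }
    return (float)(sum / numSamples);
}
```

With diffuseValue = 0.5, specularValue = 0.3 and specularChance = 0.25, the average approaches 0.8. Without the division it would approach 0.25 × 0.3 + 0.75 × 0.5 = 0.45, undercounting both lobes.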

Rough Refraction & Absorption

Time for the fun! We’ll talk about the features and show the results in this section, then show how to get them into the path tracer in the next section.

Thinking about the last post for a second… in simple rendering, and old style raytracers, reflection works by using the reflect() formula/function to find the perfectly sharp mirror reflection angle for a ray hitting a surface, and traces that ray. In the last post we showed how to inject some randomness into that reflected ray, by using a material roughness value to lerp between the perfectly reflected ray and a random ray over the normal oriented hemisphere.

For refraction we are going to do much the same thing.

For simple rendering and old style raytracers, there is a refract() formula/function that finds a perfectly sharp refraction angle for a ray hitting a surface with a specific IOR (index of refraction, the same parameter used for fresnel). To make refraction rough, we lerp between that perfectly refracted ray and a random ray using the material roughness, just like we did for reflection. The only difference is that since refracted rays go THROUGH an object instead of reflecting off of it, the random hemisphere is oriented around the negative normal of the surface.

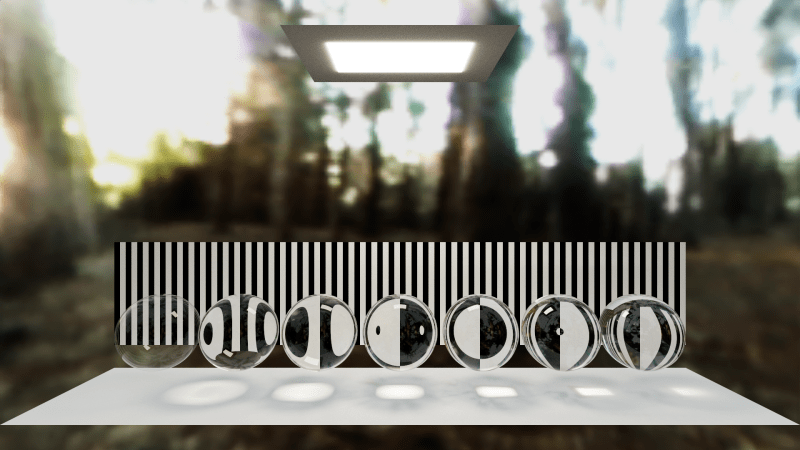

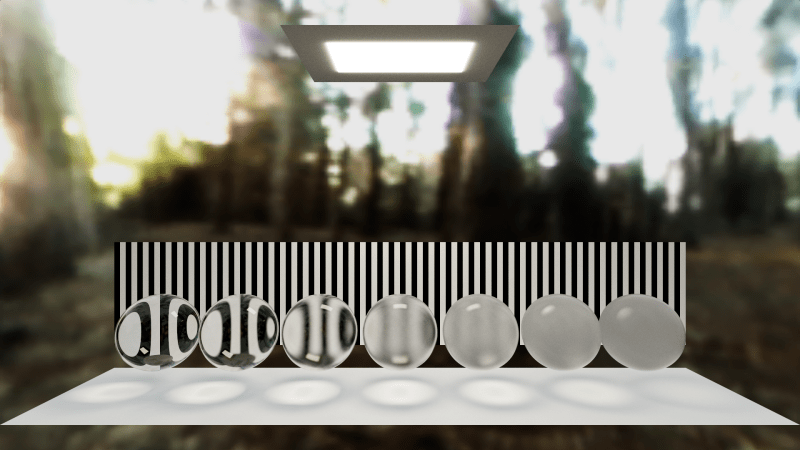

Below is an image of refractive spheres going from IOR 1 on the left, to 1.5 on the right. Notice how the background gets distorted as the IOR increases, and also notice how the light under the spheres gets focused. Those are “caustics” and get way more interesting with other shaped meshes. (this is SCENE define value 1 in the shadertoy)

You might notice some dark streaks on the ground under the spheres in the middle. When I first saw these, I thought it was a bug, but through experimentation I found out that they are actually projections of the images in the sky (the top of the skybox).

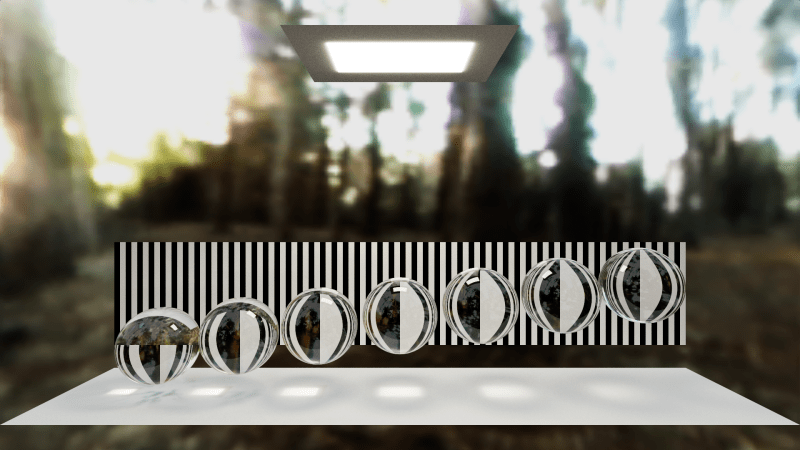

To further demonstrate this, here’s a scene where the spheres are all the same IOR but they are different distances from the floor, which focuses/defocuses the image projected through the sphere, as well as the light above the spheres. (SCENE define value 4 in the shadertoy)

With a different skybox, it looks like this. Notice how the projection on the ground at the left has that circle shape?

Looking up at what is above the spheres, you can see the circle that was projected onto the ground. This is one of the coolest things about path tracing: you get a lot of techniques "for free" that are just emergent features of the math. It's a lot different than approaching graphics with rasterization / non path traced rendering, where you basically have to write explicit code to approximate every feature you want.

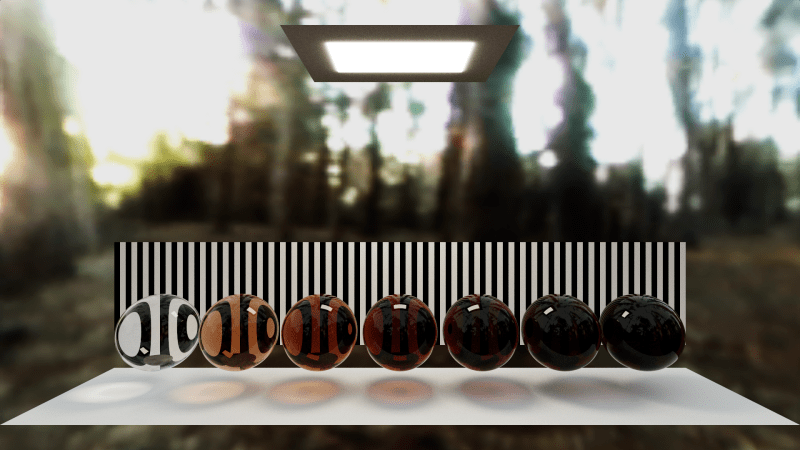

Going back to roughness, here are some refractive spheres using an IOR of 1.1, but varying in roughness from 0 on the left, to 0.5 on the right. Notice that the spheres themselves get obviously more rough, but also their shadow / caustics change with roughness too. (SCENE define value 6 in the shadertoy)

It’s important to notice that in these, it’s just the SURFACE of the sphere that has any roughness to it. If you were able to take one of these balls and crack it in half, the inside would be completely smooth and transparent. In a future post (next, I think!) we’ll talk about how to make some roughness inside of objects, which is also how you render smoke, fog, clouds, and also things like skin, milk, and wax.

Another fun feature you can add to transparent objects is “absorption” which means that light is absorbed over distance as it travels through the object.

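We’re going to use Beer’s law to get a multiplier for light that travels through the object, to make that light decrease. The formula for that is just this, where absorb is the absorption amount and distance is how far the light traveled through the object:

$$\text{multiplier} = e^{-\text{absorb} \cdot \text{distance}}$$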

For that, we can use a different absorption value per color channel so that different colors absorb quicker or slower as the light goes through the object.

Here are some spheres that have progressively more absorption. A percentage multiplier goes from 0 on the left to 1 on the right and is multiplied by (1.0, 2.0, 3.0) to get the absorption value. Notice how the spheres change but so do the caustics / shadows. This is SCENE define value 3 in the shadertoy.

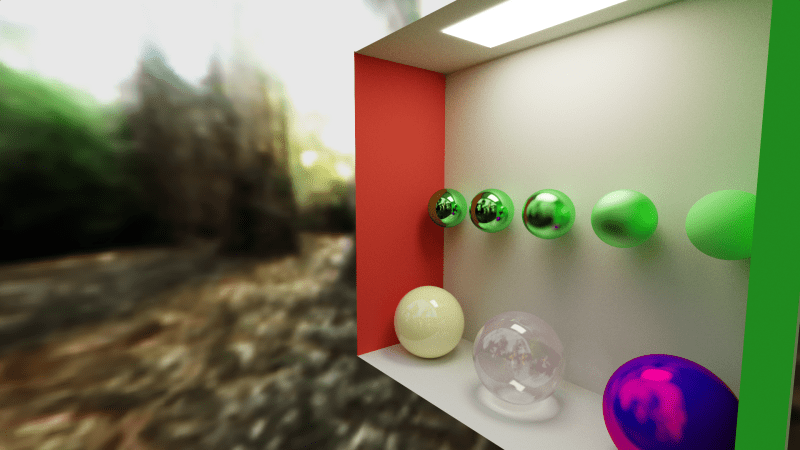

You can combine roughness and absorption to make some interesting things. Here are the same spheres, but with some absorption (SCENE define value 0).

Here’s the same scene from a different view, which shows how rich the shading for these objects is.

Implementing Rough Refraction & Absorption

Let’s implement this stuff!

The first thing to do is to add more fields to our SMaterialInfo struct.

We should have something roughly like this right now:

```glsl
struct SMaterialInfo
{
    vec3 albedo;           // the color used for diffuse lighting
    vec3 emissive;         // how much the surface glows
    float percentSpecular; // percentage chance of doing specular instead of diffuse lighting
    float roughness;       // how rough the specular reflections are
    vec3 specularColor;    // the color tint of specular reflections
    float IOR;             // index of refraction. used by fresnel and refraction.
};
```

Change it to this:

```glsl
struct SMaterialInfo
{
    // Note: diffuse chance is 1.0f - (specularChance+refractionChance)
    vec3 albedo;               // the color used for diffuse lighting
    vec3 emissive;             // how much the surface glows
    float specularChance;      // percentage chance of doing a specular reflection
    float specularRoughness;   // how rough the specular reflections are
    vec3 specularColor;        // the color tint of specular reflections
    float IOR;                 // index of refraction. used by fresnel and refraction.
    float refractionChance;    // percent chance of doing a refractive transmission
    float refractionRoughness; // how rough the refractive transmissions are
    vec3 refractionColor;      // absorption for beer's law
};
```

You might notice that there is both a specular roughness and a refraction roughness. Those could be combined into a single “surface roughness” used by both, if desired. You would lose the ability to give reflections a different roughness than refraction, but that wouldn’t be a huge loss.

Also, there is a specular chance, and refraction chance, with an implied diffuse chance making those sum to 1.0.

Instead of doing chances that way, another thing to try could be to make the diffuse chance be the sum of the albedo components, the specular chance be the sum of the specular color components, and the refraction chance be the sum of refraction color components. You could then divide them all by the sum of all 3 chances, so that they summed up to 1.0.

This would let you get rid of those percentages, and those should be pretty good choices. The only weird thing is that the meaning of refraction color is sort of reversed compared to the others: the others describe how much light to let through, but refraction color describes how much light to block. You might have better luck subtracting refraction color from (1,1,1) and using that to make a refraction chance.

Since the light from rays is divided by the probability of choosing that type of ray (diffuse, specular, or refractive), it doesn’t really matter how you choose the probabilities for the ray type, but when the probabilities better match the contribution to the pixel’s final color (more contribution = higher percentage), you are going to get a better (more converged) image more quickly. This is straight up importance sampling, and I’ll stop there so we can keep this casual 🙂
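A minimal C sketch of the alternative chance scheme described above, with hypothetical names (ChancesFromColors, RayChances). Two assumptions go beyond the text: each (1 - refractionColor) channel is clamped to [0, 1], since absorption values can be larger than 1, and an isRefractive flag zeroes the refraction weight for opaque materials, which would otherwise get a large refraction chance from a refractionColor of (0,0,0).

```c
typedef struct { float r, g, b; } rgb;

typedef struct { float diffuse, specular, refraction; } RayChances;

static float sum3(rgb c) { return c.r + c.g + c.b; }

static float clamp01(float x) { return x < 0.0f ? 0.0f : (x > 1.0f ? 1.0f : x); }

/* Weights each ray type by the summed color it carries, then normalizes to sum to 1. */
RayChances ChancesFromColors(rgb albedo, rgb specularColor, rgb refractionColor, int isRefractive)
{
    float diffuse = sum3(albedo);
    float specular = sum3(specularColor);
    /* refractionColor is an absorption amount, so flip it: (1,1,1) - refractionColor */
    float refraction = isRefractive
        ? clamp01(1.0f - refractionColor.r) + clamp01(1.0f - refractionColor.g) + clamp01(1.0f - refractionColor.b)
        : 0.0f;
    float total = diffuse + specular + refraction;

    RayChances c = {0.0f, 0.0f, 0.0f};
    if (total <= 0.0f)
    {
        c.diffuse = 1.0f; /* nothing to go on: always choose diffuse */
        return c;
    }
    c.diffuse = diffuse / total;
    c.specular = specular / total;
    c.refraction = refraction / total;
    return c;
}
```

Because the chosen ray is still divided by its probability, these weights only affect how quickly the image converges, not what it converges to.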

The next change is to address a problem we are likely to hit. Now that our material has quite a few components, it would be real easy to forget to set one when setting material properties. If you ever forget to set one, you are either going to have uninitialized values, or values coming from a previous ray hit that is no longer valid. That is going to make some real hard to track bugs, along with the possibility of undefined behavior due to uninitialized values (AFAIK). So, I made a function that initializes a material roughly to zero, but mainly just to sane values. The idea is that you call this function to init a material, and then set the specific values you care about.

```glsl
SMaterialInfo GetZeroedMaterial()
{
    SMaterialInfo ret;
    ret.albedo = vec3(0.0f, 0.0f, 0.0f);
    ret.emissive = vec3(0.0f, 0.0f, 0.0f);
    ret.specularChance = 0.0f;
    ret.specularRoughness = 0.0f;
    ret.specularColor = vec3(0.0f, 0.0f, 0.0f);
    ret.IOR = 1.0f;
    ret.refractionChance = 0.0f;
    ret.refractionRoughness = 0.0f;
    ret.refractionColor = vec3(0.0f, 0.0f, 0.0f);
    return ret;
}
```

Every time I set material info (that is, every time a ray intersection test passes), I first call this function, and then set the specific values I care about. I also call it on the SRayHitInfo struct itself when I first declare it in GetColorForRay().

Next, also add a bool “fromInside” to SRayHitInfo. When we intersect an object, we need to know whether we hit it from the inside or the outside. Refraction makes it possible for rays to go inside of objects, so we now need to know when that happens.

```glsl
struct SRayHitInfo
{
    bool fromInside;
    float dist;
    vec3 normal;
    SMaterialInfo material;
};
```

In TestQuadTrace(), where it modifies the ray hit info, make sure to set fromInside to false. We are going to say there is no inside to a quad. You could make the negative side of the quad (based on its normal) count as the inside, and maybe build boxes etc. out of quads, but I decided not to deal with that complexity.

TestSphereTrace() already has the concept of whether the ray hit the sphere from the inside or not, but it doesn’t expose it to the caller. I just have it set the ray hit info fromInside bool based on that fromInside bool that already exists.

The various uses of roughness and percentSpecular are going to need to change to specularRoughness and specularChance.

The final shading inside the bounce for loop changes quite a bit, so I'm just going to paste it below. It is commented pretty heavily, so hopefully it makes sense.

The biggest thing worth explaining I think is how absorption happens. We don’t know how much absorption is going to happen when we enter an object because we don’t know how far the ray will travel through the object. Because of this, we can’t change the throughput to account for absorption when entering an object. We need to instead wait until we hit the far side of the object and then can calculate absorption and update the throughput to account for it. Another way of looking at this is that “when we hit an object from the inside, it means we should calculate and apply absorption”. This also handles the case of where a ray might bounce around inside of an object multiple times before leaving (due to specular reflection and fresnel happening INSIDE an object) – absorption would be calculated and applied at each internal specular bounce.

```glsl
for (int bounceIndex = 0; bounceIndex <= c_numBounces; ++bounceIndex)
{
    // shoot a ray out into the world
    SRayHitInfo hitInfo;
    hitInfo.material = GetZeroedMaterial();
    hitInfo.dist = c_superFar;
    hitInfo.fromInside = false;
    TestSceneTrace(rayPos, rayDir, hitInfo);

    // if the ray missed, we are done
    if (hitInfo.dist == c_superFar)
    {
        ret += SRGBToLinear(texture(iChannel1, rayDir).rgb) * c_skyboxBrightnessMultiplier * throughput;
        break;
    }

    // do absorption if we are hitting from inside the object
    if (hitInfo.fromInside)
        throughput *= exp(-hitInfo.material.refractionColor * hitInfo.dist);

    // get the pre-fresnel chances
    float specularChance = hitInfo.material.specularChance;
    float refractionChance = hitInfo.material.refractionChance;
    //float diffuseChance = max(0.0f, 1.0f - (refractionChance + specularChance));

    // take fresnel into account for specularChance and adjust other chances.
    // specular takes priority.
    // chanceMultiplier makes sure we keep diffuse / refraction ratio the same.
    float rayProbability = 1.0f;
    if (specularChance > 0.0f)
    {
        // arguments are (n1, n2, normal, incident, f0, f90)
        specularChance = FresnelReflectAmount(
            hitInfo.fromInside ? hitInfo.material.IOR : 1.0,
            !hitInfo.fromInside ? hitInfo.material.IOR : 1.0,
            hitInfo.normal, rayDir, hitInfo.material.specularChance, 1.0f);

        // the max() avoids 0/0 when the material is fully specular (specularChance == 1.0)
        float chanceMultiplier = (1.0f - specularChance) / max(1.0f - hitInfo.material.specularChance, 0.0001f);
        refractionChance *= chanceMultiplier;
        //diffuseChance *= chanceMultiplier;
    }

    // calculate whether we are going to do a diffuse, specular, or refractive ray
    float doSpecular = 0.0f;
    float doRefraction = 0.0f;
    float raySelectRoll = RandomFloat01(rngState);
    if (specularChance > 0.0f && raySelectRoll < specularChance)
    {
        doSpecular = 1.0f;
        rayProbability = specularChance;
    }
    else if (refractionChance > 0.0f && raySelectRoll < specularChance + refractionChance)
    {
        doRefraction = 1.0f;
        rayProbability = refractionChance;
    }
    else
    {
        rayProbability = 1.0f - (specularChance + refractionChance);
    }

    // numerical problems can cause rayProbability to become small enough to cause a divide by zero.
    rayProbability = max(rayProbability, 0.001f);

    // update the ray position
    if (doRefraction == 1.0f)
    {
        rayPos = (rayPos + rayDir * hitInfo.dist) - hitInfo.normal * c_rayPosNormalNudge;
    }
    else
    {
        rayPos = (rayPos + rayDir * hitInfo.dist) + hitInfo.normal * c_rayPosNormalNudge;
    }

    // Calculate a new ray direction.
    // Diffuse uses a normal oriented cosine weighted hemisphere sample.
    // Perfectly smooth specular uses the reflection ray.
    // Rough (glossy) specular lerps from the smooth specular to the rough diffuse by the material roughness squared
    // Squaring the roughness is just a convention to make roughness feel more linear perceptually.
    vec3 diffuseRayDir = normalize(hitInfo.normal + RandomUnitVector(rngState));

    vec3 specularRayDir = reflect(rayDir, hitInfo.normal);
    specularRayDir = normalize(mix(specularRayDir, diffuseRayDir, hitInfo.material.specularRoughness*hitInfo.material.specularRoughness));

    vec3 refractionRayDir = refract(rayDir, hitInfo.normal, hitInfo.fromInside ? hitInfo.material.IOR : 1.0f / hitInfo.material.IOR);
    refractionRayDir = normalize(mix(refractionRayDir, normalize(-hitInfo.normal + RandomUnitVector(rngState)), hitInfo.material.refractionRoughness*hitInfo.material.refractionRoughness));

    rayDir = mix(diffuseRayDir, specularRayDir, doSpecular);
    rayDir = mix(rayDir, refractionRayDir, doRefraction);

    // add in emissive lighting
    ret += hitInfo.material.emissive * throughput;

    // update the colorMultiplier. refraction doesn't alter the color until we hit the next thing, so we can do light absorption over distance.
    if (doRefraction == 0.0f)
        throughput *= mix(hitInfo.material.albedo, hitInfo.material.specularColor, doSpecular);

    // since we chose randomly between diffuse, specular, refract,
    // we need to account for the times we didn't do one or the other.
    throughput /= rayProbability;

    // Russian Roulette
    // As the throughput gets smaller, the ray is more likely to get terminated early.
    // Survivors have their value boosted to make up for fewer samples being in the average.
    {
        float p = max(throughput.r, max(throughput.g, throughput.b));
        if (RandomFloat01(rngState) > p)
            break;

        // Add the energy we 'lose' by randomly terminating paths
        throughput *= 1.0f / p;
    }
}
```
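The chance bookkeeping in that loop can be pulled out and tested on its own. Below is a C sketch of it with hypothetical names (RayChances, AdjustChancesForFresnel, SelectRayType). The diffuse chance, which is commented out in the shader, is tracked explicitly here so we can check that the three chances still sum to 1 and that the refraction-to-diffuse ratio survives the fresnel adjustment. It also covers the fully specular case, where 1.0 minus the material's specular chance is zero and the multiplier needs a guard.

```c
typedef struct { float specular, refraction, diffuse; } RayChances;

/* Fresnel raises the specular chance from the material's baseSpecular to fresnelSpecular;
   the remaining probability is split between refraction and diffuse in their original ratio. */
RayChances AdjustChancesForFresnel(float baseSpecular, float baseRefraction, float fresnelSpecular)
{
    float baseDiffuse = 1.0f - (baseSpecular + baseRefraction);
    if (baseDiffuse < 0.0f)
        baseDiffuse = 0.0f;
    /* if the material is already fully specular there is nothing left to rescale */
    float remaining = 1.0f - baseSpecular;
    float chanceMultiplier = (remaining > 0.0f) ? (1.0f - fresnelSpecular) / remaining : 0.0f;

    RayChances c;
    c.specular = fresnelSpecular;
    c.refraction = baseRefraction * chanceMultiplier;
    c.diffuse = baseDiffuse * chanceMultiplier;
    return c;
}

/* Chooses a ray type from one uniform roll in [0, 1): 0 = diffuse, 1 = specular, 2 = refraction.
   Writes the probability of the chosen type (clamped like the shader) to *rayProbability. */
int SelectRayType(RayChances c, float roll, float *rayProbability)
{
    int rayType = 0;
    float p = 1.0f - (c.specular + c.refraction);
    if (c.specular > 0.0f && roll < c.specular)
    {
        rayType = 1;
        p = c.specular;
    }
    else if (c.refraction > 0.0f && roll < c.specular + c.refraction)
    {
        rayType = 2;
        p = c.refraction;
    }
    if (p < 0.001f)
        p = 0.001f;
    *rayProbability = p;
    return rayType;
}
```

For a material with specular 0.2 and refraction 0.6 (so diffuse 0.2), a fresnel value of 0.5 leaves 0.5 to share: refraction becomes 0.375 and diffuse 0.125, keeping their 3:1 ratio.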

Orbit Camera

Now that we can do all these cool renders, it kind of sucks that the camera is stuck in one place.

It really is a lot of fun being able to move the camera around and look at things from different angles. It’s also really helpful in debugging – like when we were able to see the circle on the ceiling of the skybox!

Luckily an orbit camera is pretty easy to add!

First drop this function in the buffer A tab, right above the mainImage function. You can leave the constants there or put them into the common tab, whichever you’d rather do.

```glsl
// mouse camera control parameters
const float c_minCameraAngle = 0.01f;
const float c_maxCameraAngle = (c_pi - 0.01f);
const vec3 c_cameraAt = vec3(0.0f, 0.0f, 20.0f);
const float c_cameraDistance = 20.0f;

void GetCameraVectors(out vec3 cameraPos, out vec3 cameraFwd, out vec3 cameraUp, out vec3 cameraRight)
{
    // if the mouse is at (0,0) it hasn't been moved yet, so use a default camera setup
    vec2 mouse = iMouse.xy;
    if (dot(mouse, vec2(1.0f, 1.0f)) == 0.0f)
    {
        cameraPos = vec3(0.0f, 0.0f, -c_cameraDistance);
        cameraFwd = vec3(0.0f, 0.0f, 1.0f);
        cameraUp = vec3(0.0f, 1.0f, 0.0f);
        cameraRight = vec3(1.0f, 0.0f, 0.0f);
        return;
    }

    // otherwise use the mouse position to calculate camera position and orientation
    float angleX = -mouse.x * 16.0f / float(iResolution.x);
    float angleY = mix(c_minCameraAngle, c_maxCameraAngle, mouse.y / float(iResolution.y));

    cameraPos.x = sin(angleX) * sin(angleY) * c_cameraDistance;
    cameraPos.y = -cos(angleY) * c_cameraDistance;
    cameraPos.z = cos(angleX) * sin(angleY) * c_cameraDistance;

    cameraPos += c_cameraAt;

    cameraFwd = normalize(c_cameraAt - cameraPos);
    cameraRight = normalize(cross(vec3(0.0f, 1.0f, 0.0f), cameraFwd));
    cameraUp = normalize(cross(cameraFwd, cameraRight));
}
```

The mainImage function also changes quite a bit between the seeding of rng and the raytracing – basically the stuff that controls jitter for TAA and calculates the camera vectors. The whole function is below, hopefully well enough commented to understand.

```glsl
void mainImage( out vec4 fragColor, in vec2 fragCoord )
{
    // initialize a random number state based on frag coord and frame
    uint rngState = uint(uint(fragCoord.x) * uint(1973) + uint(fragCoord.y) * uint(9277) + uint(iFrame) * uint(26699)) | uint(1);

    // calculate subpixel camera jitter for anti aliasing
    vec2 jitter = vec2(RandomFloat01(rngState), RandomFloat01(rngState)) - 0.5f;

    // get the camera vectors
    vec3 cameraPos, cameraFwd, cameraUp, cameraRight;
    GetCameraVectors(cameraPos, cameraFwd, cameraUp, cameraRight);
    vec3 rayDir;
    {
        // calculate a screen position from -1 to +1 on each axis
        vec2 uvJittered = (fragCoord+jitter)/iResolution.xy;
        vec2 screen = uvJittered * 2.0f - 1.0f;

        // adjust for aspect ratio
        float aspectRatio = iResolution.x / iResolution.y;
        screen.y /= aspectRatio;

        // make a ray direction based on camera orientation and field of view angle.
        // the screen spans -1 to +1 horizontally, so its distance is 1 / tan(fov / 2)
        float cameraDistance = 1.0f / tan(c_FOVDegrees * 0.5f * c_pi / 180.0f);
        rayDir = vec3(screen, cameraDistance);
        rayDir = normalize(mat3(cameraRight, cameraUp, cameraFwd) * rayDir);
    }

    // raytrace for this pixel
    vec3 color = vec3(0.0f, 0.0f, 0.0f);
    // NOTE: part of the original listing was lost here. It began with
    // "for (int index = 0; index <", presumably accumulated GetColorForRay() results
    // into color, and then declared the bool spacePressed used below.
    // Its surviving tail was "0.1);".

    // average the frames together
    vec4 lastFrameColor = texture(iChannel0, fragCoord / iResolution.xy);
    // NOTE: the start of this condition was also lost; it began with a test on iFrame,
    // and only the part from "0.0 ||" onward survived.
    float blend = (iFrame 0.0 || lastFrameColor.a == 0.0f || spacePressed) ? 1.0f : 1.0f / (1.0f + (1.0f / lastFrameColor.a));
    color = mix(lastFrameColor.rgb, color, blend);

    // show the result
    fragColor = vec4(color, blend);
}
```

After you’ve done that, you can drag the left mouse in the image to move the camera around 🙂

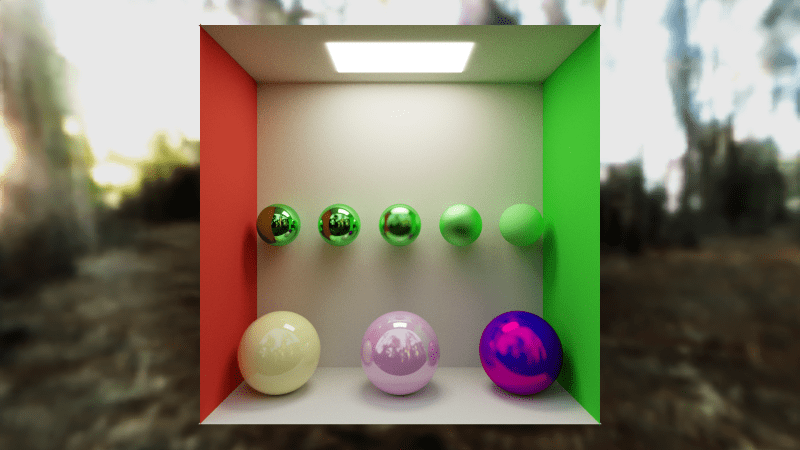

If you follow these steps with the scene from last post, and change the central sphere to be a bit refractive, you can finally view the box from a different angle!

Closing

Before we go, I want to show some refractive spheres with IOR 1, refraction roughness 0, but fading between a purely refractive surface on the left, to a purely diffuse surface on the right. This is SCENE define value 5 in the shadertoy.

Compare this one to the pure absorption scene shown earlier, and also to the pure roughness scene.

All three of these obscure what is behind them more as the effect is turned up, and all of them can also be applied partially, to any level.

So, all three of these examples are semi transparent.

Which of these is it we are talking about when we are doing traditional alpha blending in a rasterized scene?

Well it turns out to be a bit ambiguous.

If the shadow of a semi transparent object is colored, that means absorption is the best model for it. If the shadow of a semi transparent object is just darkened, the partially diffuse surface may be the best model (could also be absorption though). If the object doesn’t cast a shadow (or barely does), it is an object made semi transparent by roughness on the surface of the object, or INSIDE the object (we’ll talk about that case later). That is especially true if the appearance (lighting) of the object changes significantly when the lighting behind it (or around it) changes, vs an object which is only lit from the front.

Kind of a strange thing thinking about alpha as all these different effects, but good to be aware of it too if doing realistic rendering.

Who knew transparency would be so opaque?

Oh PS – a color tip I got from an artist once was to never use pure primary colors (I think it was Ron Harvey @ monolith. If you are reading this Ron, hello!). This is nice from an artistic standpoint, but also, if you think about how lighting is multiplied by these various colors, whenever you make any color channel 0, it means none of that color channel will make it through that multiplication. Completely killing light of any kind feels wrong and does make things look subtly wrong (or not subtly!), because nothing in the real world is so perfect or pure that it doesn’t reflect SOME light of a different frequency than its main frequency or frequencies. If it were so impossibly pure, dust would fall on it and change that. Also, it’s probably a good idea to stay away from 1 for similar reasons – nothing perfectly reflects anything in the real world, there is always some imperfection – again, if it were, dust would fall on it and change it.

I think volumetrics and depth of field are next 🙂

Thanks for reading, and I’d love to see anything you make. You can find me at @Atrix256

Hi there!

Awesome series of posts. Always wanted to learn path tracing, but never had the time. Part 1 really piqued my curiosity, and since it presented the basics in a really clear, concise and effective way I couldn’t help but follow it. Parts 2 and 3 are just as good.

Just so you’d know, I’ve ported your code (up to part 2) to Unity, adding camera and material system support, so that more people can play with it in a familiar environment. You can find it here: https://forum.unity.com/threads/free-toy-gpu-pathtracer.910655/

I’ll soon add part 3, as well as basic texture coordinates generation for spheres/quads and albedo/normal maps.

If you take requests, could you go over next event estimation (direct light sampling) sometime? looks like a relatively elegant way of decoupling direct/indirect lighting and improving convergence speed, but the specifics are a bit over my head.

Keep up the great work! Also, let me know if there’s a way I can buy you a coffee or two 🙂


Wow that is rad. I'm going to share your awesome work on twitter. If you are over there hit me up @atrix256 so I can give you credit 🙂


To second arkano22: this is a really great series of posts. I wouldn’t have imagined it was possible to go from “my first shader” to path traced refraction in any meaningful way in 3 blog posts, but it turns out *you* can. This was more understandable and inspiring than any other intro I’ve seen. Just enough info + working code + great-looking results. Thanks!

Now, is there any chance you might do that “future post” about fog, smoke etc?


What’s the reason specularRayDir is lerping using the diffuseRayDir while refractionRayDir is lerping using a new cosine weighted direction? (I mean, why there’s no symmetry?)

If it’s for saving a little bit more ALU in the specular case – can’t we just lerp to -diffuseRayDir, which is a legit cosine weighted direction on the other hemisphere (facing “inside”)?


Yeah I think that ought to work fine. No specific reason for it to be the way it is.


Complete shadertoy cornellbox demo: https://www.shadertoy.com/view/wl3yz2


Hi! Great series of tutorials!

I’m fascinated with ray tracing and its implementation via path tracing.

Just letting you know I’ve ported your code (parts 1 and 2) to Cycling ‘74 Max (Jitter) and added some modifications, like camera movement and other stuff.

I’ve some screenshots here. I’ve managed also to incorporate a Cornell Box with 80 procedural generated spheres (courtesy of other Max programmer) that have albedo, emissive, reflections, IOR properties.

https://share.icloud.com/photos/0caTUepkZsA0TWOLyWarYiBlg

https://share.icloud.com/photos/088UO-zibIuxZ0iz7IkSe586A

I’ll soon add part 3, also I’m working in porting Peter Shirley’s RIOW examples.

I’m trying to organize all these material in order to generate my own version of the code.

Currently I’m having issues trying to incorporate depth of field and motion blur into the scene.

Do you take requests? Could you go over depth of field implementation?

I tried to reach you on Twitter.

Again, great work! Also, let me know if there’s a way I can contribute with your work in any way.

Many thanks!


Here’s a neat runtime friendly depth of field effect

https://blog.voxagon.se/2018/05/04/bokeh-depth-of-field-in-single-pass.html

Here’s a path tracing depth of field resource

https://blog.demofox.org/2018/07/04/pathtraced-depth-of-field-bokeh/


Many thanks for the super quick reply!

I’ll check it out those resources and try to implement it in the code.

Have a great weekend!


You too! I’m not sure why Twitter contact didn’t work. Sorry if I missed it somehow! Mastodon seems to work better. My Mastodon link is in my Twitter profile. Ttyl!


Cool! I’ll try to contact you in Mastodon. I’m fairly new to the app. I’ve to search how to send DM 😊

Many thanks!


Nice set of methods that give good results without ending up nose deep in microfacet theory. Part 2 stuff has been implemented in the Chunky minecraft app which I use avidly, I’m currently working on adding some of the Part 3 stuff. I noticed that the lerping the specular reflection and refraction directions with a random vector produces a direction bias. Is there a simple way to correct for that bias or is it sound under a more complex model?


I don’t know of one, but I don’t know of a reason there couldn’t be one.


neat set of methods without ending up nose deep in microfacet models. I noticed that lerping with a random direction introduces a direction bias for both reflection and refraction. Is there a good way to correct for this bias?


Does this implementation of Russian Roulette introduce bias for rays with max(throughput) > 1.0?

These rays never get terminated, but we still normalize by p, losing energy…


Yeah I think you are right. Emission could make a ray biased. I'm not sure how this is normally dealt with, do you know?


throughput /= rayProbability;

max(throughput) > 1.0 happens whenever rayProbability is smaller than current throughput. E.g a very low specular chance (0.01) and a normal throughput like 0.5 => 0.5 / 0.01 = 5. No emission involved.

Looks like people just clamp p to 1.0 which is equivalent to doing no RR for these rays. In some sense your method is nice because it avoids fireflies at the cost of visible image difference.


Ok looking at this more I believe I found a proper fix.

First of all: Adding a clamp like I described above to the current code only adds more problems. Imagine we have albedo = 1.0 and a low SpecularChance = 0.01. Using the current throughput /= rayProbability; that means we’ll add energy from nowhere and now there is no way to normalize it.

Now the fix: We are choosing the ray type directly using the probabilities of the material description so we are perfectly importance sampling that distribution. Which means the pdf (rayProbability) is actually 1.0 and can be omitted. This means throughput will never go above 1.0 and the current RR is fine as is.

Btw the same principle applies to the BRDF sampling. This code never introduces the concept of a BRDF. There is no function “SampleSpecularBrdf(out float pdf)” etc. We just shoot rays in whatever direction. What happens is that the BRDF becomes implicitly defined by how we shoot rays, perfectly importance sampling it with PDF=1.0. So it works the other way around from usual.


Thanks for taking the time to think this through and explain it.
